Building an Internet of Things (IoT) product that survives first contact with reality is a test of engineering discipline. Get it wrong, and you’re saddled with stalled prototypes, budget overruns, and security flaws that can derail a program before it reaches the market. The stakes are high; failing to master the interplay between firmware, cloud, and manufacturing readiness is a recipe for expensive, unscalable systems that fail in the field.

This guide is for the operators accountable for shipping complex connected systems: VPs of Engineering, CTOs, Program Managers, and Lead Engineers. It's for teams who know a bench-top prototype is miles away from a mass-produced product. This guide is not for hobbyists or teams focused solely on mobile app development. We will focus on the strategic decisions that determine reliability, cost, and time-to-market.

This guide provides an operational framework to:

- Make critical architecture trade-offs between edge and cloud processing.

- Define a robust device-to-cloud contract to enable parallel development.

- Implement a verification and security strategy that de-risks production.

Architecting Your IoT System for Reliability and Scale

A great IoT product isn't built on clever hardware or a slick app; it’s built on a rock-solid, scalable architecture. This blueprint defines how your system performs under pressure, its total cost of ownership, and whether it can adapt to future customer demands. A flawed architecture creates a mountain of technical debt that grinds development to a halt and makes maintenance a painful ordeal.

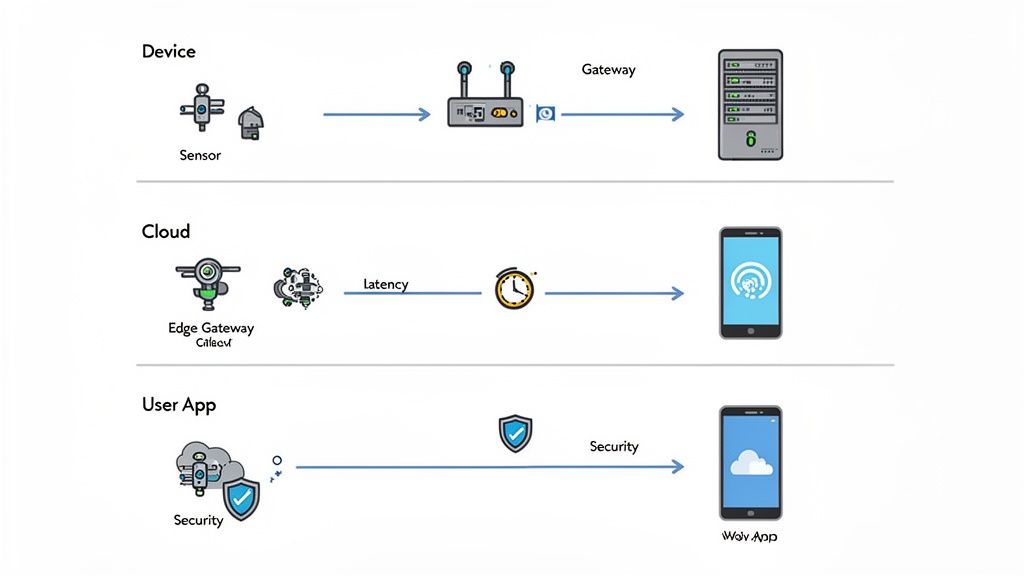

Effective internet of things software development begins with a clear system map. Data flows across four distinct layers:

- Device Layer: The physical hardware and its embedded firmware—the "thing" responsible for sensing, actuation, and initial communication.

- Edge Layer: An optional but often critical local gateway that can perform on-site data processing, filtering, and protocol translation, reducing cloud dependency.

- Cloud Layer: The central backend that handles device management, data storage, large-scale processing, and analytics.

- Application Layer: The user-facing interface, typically a web dashboard or mobile app, used for control and data visualization.

Edge vs. Cloud Processing: The Central Architectural Decision

One of the first and most critical architectural decisions is where data gets processed. This choice has cascading effects on latency, operating costs, reliability, and security. There is no single "right" answer—only the best fit for your specific operational and business constraints. Moving computation closer to the device is a strategic move that dictates your system's real-time performance and its entire cost structure.

Real-World Scenario: Medical vs. Agricultural IoT

A medical device monitoring patient vitals cannot tolerate the latency of a round trip to the cloud; an anomaly requires an instant local alert. For this use case, edge processing is non-negotiable for patient safety.

Conversely, a network of agricultural sensors tracking soil moisture across thousands of acres can prioritize cloud processing. This keeps the per-device hardware cost and power consumption low, which is paramount for a large-scale deployment where sub-second latency is not a driver.

The core architectural question isn't if you should process data, but where. Getting this wrong can build a system that's too slow to be useful, too expensive to scale, or too fragile to survive in the real world.

The choice between edge and cloud computing involves a series of critical trade-offs.

IoT Architecture Decision Trade-Offs

| Decision Criteria | Edge Computing | Cloud Computing |
|---|---|---|
| Latency | Very Low. Processing happens locally, enabling near-instantaneous responses for time-critical actions. | Higher. Data must travel to the cloud and back, introducing delays unsuitable for real-time control. |
| Bandwidth Cost | Lower. Only sends processed data or summaries to the cloud, significantly reducing data transmission costs. | Higher. Raw data from all devices is sent to the cloud, which can be expensive for large fleets. |
| Reliability | Higher. Can operate autonomously even if the internet connection to the cloud is lost. | Lower. Dependent on a constant, stable internet connection to function. No connection, no processing. |
| Device Cost | Higher. Edge gateways require more processing power and memory, increasing hardware costs. | Lower. End devices can be simpler and less powerful ("dumb sensors"), reducing per-unit hardware costs. |
| Scalability | Complex. Scaling requires deploying more physical edge hardware. | Simpler. Cloud resources can be scaled up or down on demand with relative ease. |
| Security | Distributed Risk. A breach may be contained to a local edge node, but creates a larger physical attack surface. | Centralized Risk. A single cloud breach could compromise the entire system, but physical security is managed by the provider. |

Many modern systems use a hybrid approach, performing time-sensitive tasks at the edge while sending aggregated insights to the cloud for long-term analysis.
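The hybrid pattern can be sketched in a few lines. This is a minimal illustration, not a reference design: the `ALERT_THRESHOLD` value, the `Summary` type, and the `process_window` function are hypothetical names introduced here. Time-critical readings trigger a local alert immediately, and only a compact summary is forwarded to the cloud.

```python
from dataclasses import dataclass
from statistics import mean
from typing import List, Tuple

# Hypothetical limit for illustration; real limits come from product requirements.
ALERT_THRESHOLD = 120.0


@dataclass
class Summary:
    """Compact record forwarded to the cloud instead of the raw readings."""
    count: int
    minimum: float
    maximum: float
    average: float


def process_window(readings: List[float]) -> Tuple[List[float], Summary]:
    """Handle one window of sensor readings at the edge.

    Returns the readings that need an immediate local alert, plus the
    summary that is the only data sent upstream for this window.
    """
    if not readings:
        raise ValueError("window must contain at least one reading")
    alerts = [r for r in readings if r > ALERT_THRESHOLD]
    summary = Summary(len(readings), min(readings), max(readings), mean(readings))
    return alerts, summary


if __name__ == "__main__":
    local_alerts, cloud_summary = process_window([72.0, 75.5, 130.2, 74.1])
    print("alert locally on:", local_alerts)
    print("send to cloud:", cloud_summary)
```

The design choice to notice is the split in return values: the alert path never waits on the network, while the cloud path carries four numbers per window regardless of how many raw samples were taken.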

Choosing Your Backend Architecture

Another pivotal decision is your cloud backend structure. A monolithic architecture is simpler to start with, but IoT systems that must scale and evolve over years usually benefit from a microservices approach. A monolith bundles all server-side logic into a single application, so every change means redeploying the whole backend and every load spike means scaling all of it at once.

A microservices architecture breaks the backend into a collection of small, independent services—one for device authentication, another for data ingestion, another for user profiles. This modularity is a game-changer for complex IoT deployments:

- Improved Scalability: Scale only the services you need. If data ingestion is a bottleneck, you can allocate more resources to that service alone.

- Enhanced Resilience: The failure of one microservice does not take down the entire system. This fault containment dramatically improves product reliability.

- Technology Flexibility: Teams can use the best language or framework for each specific job, fostering innovation and performance.

For any product needing high reliability, a microservices approach is the superior long-term bet. It aligns perfectly with the principles of designing for testability and fault containment—hallmarks of high-performing teams that ship complex products. As you map out your solution, our insights on IoT applications in fleet management can help you grasp these scaling challenges.

Navigating the IoT Tech Stack and Protocols

Choosing the right technologies is a foundational decision in any internet of things software development program. The wrong choice can introduce crippling limitations, runaway operational costs, and gaping security holes before a single unit ships. This isn't about chasing trends; it's about making deliberate engineering choices that align with your product's real-world operational constraints.

High-performing teams avoid unforced errors by rigorously defining requirements first and only then selecting the technologies to meet them. This discipline ensures the tech stack serves the product, not the other way around.

Choosing the Right IoT Connectivity Protocol

The first major decision is connectivity—how devices phone home. The choice demands a clear understanding of the operational environment, power budget, and data throughput requirements.

Short-Range Wireless: Ideal for devices communicating with a local gateway.

- Wi-Fi: Offers high-speed data transfer, making it suitable for video streaming. However, its high power consumption is a significant drawback for battery-powered devices. See our guide on the pros and cons of Wi-Fi for IoT applications.

- Bluetooth Low Energy (BLE): The champion for low-power, short-range communication. Perfect for wearables and sensors that send small data packets to a nearby smartphone or gateway.

Long-Range Wireless (LPWAN): Engineered for devices scattered over large areas, sending small amounts of data with minimal power draw.

- LoRaWAN: Excels at long-range communication (often kilometers) with extremely low power consumption. A natural fit for agricultural sensors, smart city assets, and trackers that must operate for years on a single battery.

- Cellular IoT (LTE-M/NB-IoT): Leverages existing cellular networks for reliable, secure connectivity over vast distances. It sits between LoRaWAN and traditional cellular, offering more throughput than LoRaWAN at a lower power draw than standard LTE. LTE-M supports mobility and suits moving assets like fleet trackers, while NB-IoT suits stationary devices like smart utility meters.

Selecting Your Messaging Protocol

Once connected, a device needs a language to speak with the cloud. While HTTP is familiar, its overhead makes it a poor choice for resource-constrained IoT devices. The primary contenders are MQTT and CoAP.

For high-reliability systems, especially those dealing with intermittent network connections, MQTT is usually the stronger choice. Its lightweight framing, persistent sessions, and built-in quality-of-service levels let it recover from dropped connections that plain HTTP would leave the application to detect and retry on its own.

This comparison highlights the critical trade-offs:

| Protocol | Key Characteristics | Best For |
|---|---|---|
| MQTT | Publish/subscribe model with a central broker. Lightweight header, supports multiple Quality of Service (QoS) levels to ensure message delivery. | Unreliable or intermittent networks (e.g., factory floors, remote assets). Ideal for many-to-many communication and low-power devices. |
| CoAP | Client-server model designed to work like HTTP but for constrained devices (UDP-based). Optimized for one-to-one communication. | Very resource-constrained devices where even MQTT's overhead is too much. Often used in building automation and smart lighting. |
| HTTP | Verbose, request-response protocol. High overhead and power consumption compared to MQTT/CoAP. | Devices with ample power and reliable network connections, or for simple, non-critical data transfer where familiarity is prioritized. |
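To make the overhead difference concrete, the sketch below builds an MQTT 3.1.1 PUBLISH packet by hand and compares its size with a minimal HTTP/1.1 POST carrying the same payload. The topic, host, and path are hypothetical, and the comparison covers per-message framing only. It ignores TCP, TLS, and the MQTT CONNECT handshake, so treat it as an illustration rather than a benchmark.

```python
def encode_remaining_length(length: int) -> bytes:
    """Encode MQTT's variable-length 'remaining length' field (1 to 4 bytes)."""
    if not 0 <= length <= 268_435_455:
        raise ValueError("length out of range for MQTT remaining length")
    out = bytearray()
    while True:
        byte = length % 128
        length //= 128
        if length > 0:
            byte |= 0x80  # continuation bit: more length bytes follow
        out.append(byte)
        if length == 0:
            return bytes(out)


def mqtt_publish_packet(topic: str, payload: bytes, qos: int = 0, packet_id: int = 1) -> bytes:
    """Build the bytes of an MQTT 3.1.1 PUBLISH packet (DUP and RETAIN unset)."""
    if qos not in (0, 1, 2):
        raise ValueError("qos must be 0, 1, or 2")
    topic_bytes = topic.encode("utf-8")
    variable_header = len(topic_bytes).to_bytes(2, "big") + topic_bytes
    if qos > 0:
        # A packet identifier is only present for QoS 1 and QoS 2.
        variable_header += packet_id.to_bytes(2, "big")
    body = variable_header + payload
    fixed_header = bytes([0x30 | (qos << 1)]) + encode_remaining_length(len(body))
    return fixed_header + body


def http_post_request(host: str, path: str, payload: bytes) -> bytes:
    """Build a minimal HTTP/1.1 POST request with only the essential headers."""
    head = (
        f"POST {path} HTTP/1.1\r\n"
        f"Host: {host}\r\n"
        "Content-Type: application/json\r\n"
        f"Content-Length: {len(payload)}\r\n"
        "\r\n"
    )
    return head.encode("ascii") + payload


if __name__ == "__main__":
    payload = b'{"t":71.5}'
    mqtt = mqtt_publish_packet("plant/motor/17/temp", payload, qos=1)
    http = http_post_request("iot.example.com", "/telemetry", payload)
    print("MQTT PUBLISH bytes:", len(mqtt))
    print("HTTP POST bytes:   ", len(http))
```

Most of the MQTT framing here is the topic string itself, which is why short topic names matter on metered links.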

Real-World Scenario: Industrial Motor Monitoring

We worked with an industrial automation client needing to monitor hundreds of motors to predict maintenance. The factory floor was an RF-hostile environment with intermittent Wi-Fi coverage due to heavy machinery.

- Problem: Constant, reliable data streams were needed from every motor to feed a predictive maintenance algorithm. Data loss was unacceptable, but network connectivity was spotty.

- Solution:

  - Connectivity: The team chose BLE sensors on each motor, reporting to local gateways. The gateways used hardwired Ethernet for a robust data backhaul to the cloud. This hybrid approach solved the "last mile" connectivity problem without relying on unreliable Wi-Fi.

  - Messaging: They chose MQTT with a Quality of Service level of 1 ("at least once delivery"). This was critical. When a gateway temporarily lost its connection, its MQTT client held unacknowledged messages locally and retransmitted them on reconnection, preventing any loss of vital maintenance data.

- Outcome: This requirements-driven approach ensured a reliable system that directly reduced expensive, unplanned downtime, tying the technical choice to a clear business impact.
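At-least-once delivery across a dropped link depends on the sender keeping each message until it has been acknowledged. The sketch below simulates that store-and-forward behavior in plain Python. `StoreAndForwardQueue` and `FakeLink` are hypothetical names for illustration, and a real deployment would normally rely on an MQTT client library's QoS 1 handling plus persistent storage rather than an in-memory dictionary.

```python
from collections import OrderedDict
from typing import Callable, List, Tuple


class StoreAndForwardQueue:
    """Keeps every message until it is acknowledged (at-least-once delivery)."""

    def __init__(self, send: Callable[[int, bytes], bool]) -> None:
        self._send = send  # returns False when the link is down
        self._pending: "OrderedDict[int, bytes]" = OrderedDict()
        self._next_id = 1

    def publish(self, payload: bytes) -> int:
        msg_id = self._next_id
        self._next_id += 1
        self._pending[msg_id] = payload  # store first, then attempt to send
        self._send(msg_id, payload)
        return msg_id

    def on_ack(self, msg_id: int) -> None:
        """Drop a message only once the receiver has acknowledged it."""
        self._pending.pop(msg_id, None)

    def on_reconnect(self) -> None:
        """Retransmit everything still unacknowledged, oldest first."""
        for msg_id, payload in list(self._pending.items()):
            self._send(msg_id, payload)

    @property
    def pending_count(self) -> int:
        return len(self._pending)


class FakeLink:
    """Stand-in for the network so the behavior can be exercised offline."""

    def __init__(self) -> None:
        self.up = True
        self.delivered: List[Tuple[int, bytes]] = []

    def send(self, msg_id: int, payload: bytes) -> bool:
        if not self.up:
            return False
        self.delivered.append((msg_id, payload))
        return True


if __name__ == "__main__":
    link = FakeLink()
    queue = StoreAndForwardQueue(link.send)
    link.up = False
    queue.publish(b"vibration=0.42")
    print("pending while offline:", queue.pending_count)
    link.up = True
    queue.on_reconnect()
    print("delivered after reconnect:", link.delivered)
```

Because a message can be resent after the receiver already processed it (for example, when only the acknowledgment was lost), consumers of a QoS 1 stream should tolerate duplicates.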

Mastering the IoT Development Lifecycle

The biggest point of failure in most IoT projects is not a technical bug but the chasm between hardware, firmware, and cloud software teams. When these groups work in silos, integration becomes a nightmare, schedules slip, and prototypes prove impossible to manufacture at scale. Handoffs create bottlenecks where critical details are lost.

High-performing teams solve this by adopting a unified development model, often with a single technical owner responsible for the end-to-end system. This breaks down silos and forces collaboration, slashing integration risk. The process becomes a coordinated, parallel effort, not a linear relay race.

Defining the Contract Between Firmware and Cloud

The key to enabling parallel development is establishing a clear, machine-readable "contract" between the device and the cloud. This contract is the API and data model that defines every message, data point, and command before significant code is written.

Tools like Protocol Buffers (Protobuf) or JSON Schema are ideal for this. They create a version-controlled, language-agnostic definition of all data structures. Once the contract is established:

- The firmware team can build and test code to generate and parse these messages on the device.

- The backend team can build and test cloud services using mock data that conforms to the contract.

This concurrent workflow eliminates the classic bottleneck where one team waits for the other. Both sides build against a stable, shared interface, ensuring their components will integrate seamlessly.

By treating the device-to-cloud API as a formal, version-controlled contract, you transform integration from a late-stage gamble into an early, testable milestone. This is a hallmark of mature internet of things software development organizations.
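As a rough illustration of what such a contract buys you, the sketch below defines one versioned telemetry message with explicit validation, using only the Python standard library in place of Protobuf or JSON Schema tooling. The message name, fields, and limits (`TelemetryV1`, `temperature_c`, the -40 to 125 range) are hypothetical. Backend tests can generate conforming mock data with `encode`, and any nonconforming message fails fast in `decode`.

```python
import json
from dataclasses import asdict, dataclass

SCHEMA_VERSION = 1  # hypothetical; bump on any breaking change to the message
_FIELDS = ("device_id", "timestamp_ms", "temperature_c")


@dataclass(frozen=True)
class TelemetryV1:
    device_id: str
    timestamp_ms: int
    temperature_c: float

    def validate(self) -> None:
        if not isinstance(self.device_id, str) or not self.device_id:
            raise ValueError("device_id must be a non-empty string")
        if (isinstance(self.timestamp_ms, bool)
                or not isinstance(self.timestamp_ms, int)
                or self.timestamp_ms < 0):
            raise ValueError("timestamp_ms must be a non-negative integer")
        if (isinstance(self.temperature_c, bool)
                or not isinstance(self.temperature_c, (int, float))):
            raise ValueError("temperature_c must be a number")
        if not -40.0 <= self.temperature_c <= 125.0:  # assumed sensor range
            raise ValueError("temperature_c outside the agreed range")


def encode(msg: TelemetryV1) -> bytes:
    """Serialize a validated message, tagging it with the contract version."""
    msg.validate()
    doc = {"schema_version": SCHEMA_VERSION, **asdict(msg)}
    return json.dumps(doc, separators=(",", ":")).encode("utf-8")


def decode(raw: bytes) -> TelemetryV1:
    """Parse and validate a message, rejecting anything off-contract."""
    doc = json.loads(raw)
    if not isinstance(doc, dict) or doc.get("schema_version") != SCHEMA_VERSION:
        raise ValueError("unsupported or missing schema_version")
    missing = [name for name in _FIELDS if name not in doc]
    if missing:
        raise ValueError(f"missing fields: {missing}")
    msg = TelemetryV1(**{name: doc[name] for name in _FIELDS})
    msg.validate()
    return msg


if __name__ == "__main__":
    wire = encode(TelemetryV1("motor-17", 1_700_000_000_000, 71.5))
    print(wire)
    print(decode(wire))
```

On a real device the same contract would typically be compiled to C from a `.proto` file or checked against a JSON Schema document; the point of the sketch is only that both teams test against one versioned definition.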

Designing for Reliability and Field Operations

A successful development lifecycle plans for the entire product life, especially the challenges of managing devices in the field. This is where designing for testability and maintainability provides a massive return on investment.

Over-the-Air (OTA) Updates

Bricking a device in the field is a catastrophic failure that can destroy customer trust and trigger expensive recalls. A robust OTA strategy is a requirement, not a luxury.

Key elements of a reliable OTA system include:

- Fail-Safe Mechanisms: The device must be able to roll back to its last known good firmware if an update fails due to power loss, a corrupted download, or any other issue.

- Compatibility Checks: Before installation, the device must verify that the update is compatible with its hardware revision and current software version.

- Bandwidth Efficiency: Updates should be delivered as small delta updates (incremental patches) rather than full firmware images to minimize data costs and transmission time, especially over cellular networks.
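The first two elements can be sketched as a simulated A/B (dual-slot) update flow. This is a simplified model under stated assumptions, not bootloader code: `UpdateManifest`, `Device`, `stage_update`, and `reboot` are hypothetical names, the `self_test_passes` flag stands in for the new image confirming itself after a trial boot, and delta patching is out of scope.

```python
import hashlib
from dataclasses import dataclass
from typing import Dict, FrozenSet, Optional, Tuple

Version = Tuple[int, int, int]


@dataclass(frozen=True)
class UpdateManifest:
    version: Version
    hardware_revisions: FrozenSet[str]  # board revisions this image supports
    sha256: str                         # expected digest of the image


class Device:
    def __init__(self, hardware_revision: str, version: Version) -> None:
        self.hardware_revision = hardware_revision
        self.slots: Dict[str, Optional[Version]] = {"A": version, "B": None}
        self.active = "A"
        self.pending: Optional[str] = None  # slot awaiting a trial boot

    @property
    def version(self) -> Optional[Version]:
        return self.slots[self.active]

    def stage_update(self, manifest: UpdateManifest, image: bytes) -> bool:
        """Write the image to the inactive slot only if every check passes."""
        if self.hardware_revision not in manifest.hardware_revisions:
            return False  # compatibility check: wrong board revision
        if self.version is not None and manifest.version <= self.version:
            return False  # compatibility check: not newer than current
        if hashlib.sha256(image).hexdigest() != manifest.sha256:
            return False  # integrity check: corrupted or truncated download
        inactive = "B" if self.active == "A" else "A"
        self.slots[inactive] = manifest.version
        self.pending = inactive
        return True

    def reboot(self, self_test_passes: bool) -> None:
        """Trial-boot a pending slot; keep the old slot active on failure."""
        if self.pending is None:
            return
        if self_test_passes:
            self.active = self.pending  # commit the new firmware
        # On failure the active slot is untouched, which is the rollback.
        self.pending = None


if __name__ == "__main__":
    image = b"firmware-1.1.0"
    manifest = UpdateManifest((1, 1, 0), frozenset({"rev-B"}),
                              hashlib.sha256(image).hexdigest())
    device = Device("rev-B", (1, 0, 0))
    device.stage_update(manifest, image)
    device.reboot(self_test_passes=False)
    print("after failed trial boot:", device.version)
```

The key property is that the running firmware is never overwritten: a failed or interrupted update leaves the known good slot bootable.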

Embedded Testability & Manufacturing Firmware

Discovering your product is difficult to test on the factory floor is a recipe for disaster. From the first board revision, the design must include hooks for manufacturing. This "design for testability" (DFT) is a core discipline of high-performing teams. This typically means creating a dedicated manufacturing firmware that can:

- Run automated hardware diagnostics (e.g., test RAM, flash, peripherals).

- Report sensor calibration data to the factory line.

- Securely provision unique device IDs and security credentials.

This DFT focus, embedded in the firmware from day one, is what separates a smooth production ramp from a chaotic one. It closes the gap between engineering and manufacturing, ensuring the product you designed is the one you can build.
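The flow of a manufacturing firmware pass can be sketched as a small test runner: execute each diagnostic, record the result, and provision an identity only when everything passes. All names here (`run_factory_test`, `FactoryRecord`, the example checks) are hypothetical, and `secrets.token_hex` is merely a stand-in for the factory's real provisioning step, which would normally involve a secure element or HSM.

```python
import secrets
from dataclasses import dataclass
from typing import Callable, Dict, Optional

Diagnostic = Callable[[], bool]


@dataclass
class FactoryRecord:
    """Per-unit result reported back to the factory line."""
    results: Dict[str, bool]
    device_id: Optional[str]

    @property
    def passed(self) -> bool:
        return all(self.results.values())


def run_factory_test(diagnostics: Dict[str, Diagnostic]) -> FactoryRecord:
    """Run every diagnostic; provision a device ID only if all of them pass."""
    if not diagnostics:
        raise ValueError("at least one diagnostic is required")
    results: Dict[str, bool] = {}
    for name, check in diagnostics.items():
        try:
            results[name] = bool(check())
        except Exception:
            # A crashing check counts as a failure; the run continues so the
            # line gets a complete picture of the unit.
            results[name] = False
    device_id = secrets.token_hex(8) if all(results.values()) else None
    return FactoryRecord(results, device_id)


def ram_pattern_check() -> bool:
    """Illustrative check: write and read back a pattern in a buffer."""
    buffer = bytearray(1024)
    for i in range(len(buffer)):
        buffer[i] = 0xAA if i % 2 == 0 else 0x55
    return all(b == (0xAA if i % 2 == 0 else 0x55) for i, b in enumerate(buffer))


if __name__ == "__main__":
    record = run_factory_test({"ram": ram_pattern_check, "flash": lambda: True})
    print(record.passed, record.device_id)
```

Refusing to provision a failing unit keeps credentials off hardware that will be scrapped or reworked.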

Implementing Robust Security and Compliance by Design

In IoT, security cannot be bolted on at the end. It must be woven into the fabric of the product from the first schematic. Forgetting this is a surefire path to catastrophic failures, costly redesigns, and a complete loss of market trust. A weak security posture isn't just a technical problem; it's an existential business threat.

IoT cyberattacks rose to 112 million incidents in 2022, an 87% jump from the previous year. You can learn more about the scale of IoT security challenges from this recent report. Numbers like these are why internet of things software development must be built on a foundation of security from day one. A solid security framework creates a chain of trust from the silicon to the cloud, and that chain is only as strong as its weakest link.

Establishing a Hardware Root of Trust

True device security begins with the hardware. You cannot build a secure system on insecure hardware. A hardware root of trust (RoT) is a non-negotiable requirement for any serious IoT product. This is achieved through two critical practices:

- Secure Boot: When the device powers on, an immutable piece of code verifies the cryptographic signature of the main firmware. If the signature is valid, the device boots. If not, it refuses to start, blocking unauthorized code.

- Hardware-Based Key Storage: Storing cryptographic keys in software is like leaving your house key under the doormat. A dedicated secure element (SE) or Trusted Platform Module (TPM) acts as a hardware vault, physically isolating private keys and certificates from software-based attacks and physical tampering.

A device lacking a secure boot process or hardware key storage is fundamentally untrustworthy. This is a massive red flag indicating a weak security posture that will inevitably lead to future vulnerabilities.
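The verify-before-boot control flow can be illustrated as follows. One loud caveat: real secure boot verifies an asymmetric signature (for example ECDSA or Ed25519) against a public key anchored in immutable ROM or fuses. The Python standard library has no asymmetric signature primitives, so this sketch substitutes HMAC-SHA256 with a shared key purely as a stand-in. The function names and the key are hypothetical.

```python
import hashlib
import hmac

# Stand-in for key material anchored in hardware. Assumption for illustration:
# a real device holds a public key (or its hash) here, never a shared secret.
ROOT_KEY = b"example-root-key-not-for-production"


def sign_firmware(image: bytes, key: bytes = ROOT_KEY) -> bytes:
    """Build-server side: produce the authentication tag shipped with the image."""
    return hmac.new(key, image, hashlib.sha256).digest()


def verify_and_boot(image: bytes, tag: bytes, key: bytes = ROOT_KEY) -> str:
    """Boot-ROM side: refuse to run any image whose tag does not verify."""
    expected = hmac.new(key, image, hashlib.sha256).digest()
    # compare_digest runs in constant time, avoiding a timing side channel.
    if not hmac.compare_digest(expected, tag):
        return "halt"
    return "boot"


if __name__ == "__main__":
    firmware = b"\x7fFIRMWARE v1.0.0"
    tag = sign_firmware(firmware)
    print(verify_and_boot(firmware, tag))
    print(verify_and_boot(firmware + b"\x00", tag))
```

The structure carries over to the real thing: the check happens before any byte of the image executes, and failure is a hard stop rather than a warning.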

Protecting Data End-to-End

Once the device identity is secure, the next mission is to protect its data. This means implementing end-to-end encryption, covering data both in transit and at rest.

- Data in Transit: All communication between the device, edge, and cloud must be encrypted using a proven protocol like Transport Layer Security (TLS). This prevents eavesdropping and man-in-the-middle attacks.

- Data at Rest: Data stored on the device's flash memory or in a cloud database must also be encrypted. This ensures that even if an attacker gains physical access to the device or breaches a server, the data remains unreadable.
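For the in-transit half, a gateway or backend client written in Python could configure TLS roughly as below, using the standard `ssl` module. The function name and file path parameters are hypothetical. The sketch enforces server certificate verification, hostname checking, and a TLS 1.2 minimum, and optionally loads a client certificate for mutual TLS. Encryption at rest is a separate concern not shown here.

```python
import ssl
from typing import Optional


def make_device_tls_context(
    ca_file: Optional[str] = None,
    cert_file: Optional[str] = None,
    key_file: Optional[str] = None,
) -> ssl.SSLContext:
    """Build a client-side TLS context with verification left switched on.

    ca_file:   CA bundle used to verify the server (system store if None).
    cert_file: client certificate for mutual TLS (optional).
    key_file:  private key matching cert_file (optional).
    """
    # create_default_context enables CERT_REQUIRED and hostname checking.
    context = ssl.create_default_context(ssl.Purpose.SERVER_AUTH, cafile=ca_file)
    # Refuse legacy protocol versions outright.
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    if cert_file is not None:
        # Presenting a client certificate lets the server authenticate the device.
        context.load_cert_chain(certfile=cert_file, keyfile=key_file)
    return context


if __name__ == "__main__":
    ctx = make_device_tls_context()
    print(ctx.verify_mode, ctx.check_hostname, ctx.minimum_version)
```

The common failure mode this guards against is disabling verification "temporarily" during development and shipping it that way.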

Given that most IoT solutions rely on the cloud, understanding the unique risks is vital. The top security challenges in cloud computing can undermine even experienced teams.

Security's Role in Demonstrating Compliance

For teams in regulated industries, these technical security decisions form the basis of compliance. Designing for security from the start is how you build your evidence for auditors. Our article on security in embedded systems explores these concepts further.

- Medical Devices (IEC 62304, HIPAA): Strong access controls, end-to-end encryption, and detailed audit logs are not just features; they are direct requirements for protecting patient data and ensuring device safety.

- Industrial Systems (NIST Cybersecurity Framework): Practices like secure boot and unique device identity management map directly to the NIST framework’s core functions of "Protect" and "Detect."

Postponing security means you are not just accumulating technical debt; you are engineering a product that is non-compliant by design. A security-first mindset is the fastest path to a reliable, compliant, and trustworthy product.

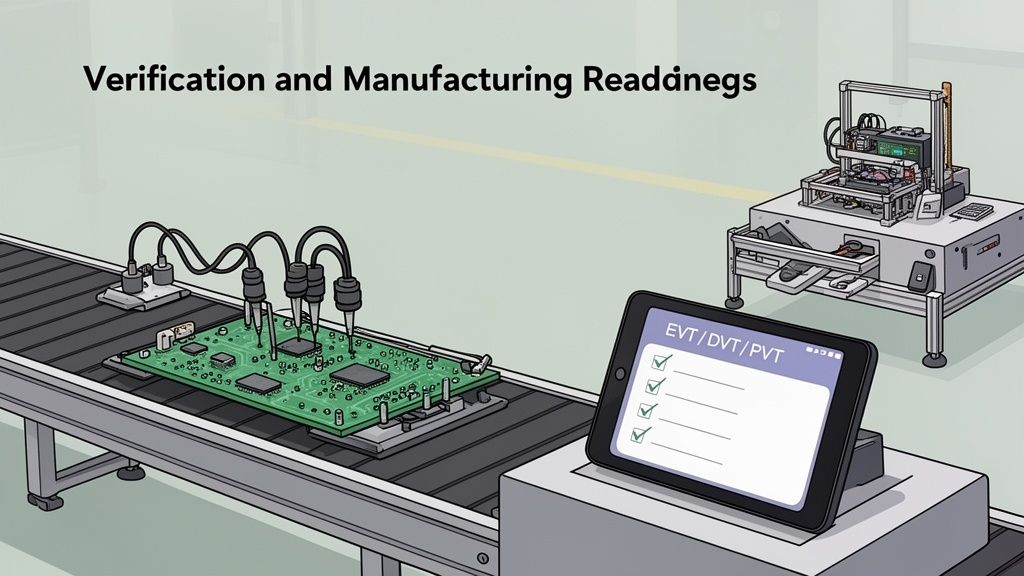

Ensuring Verification And Manufacturing Readiness

Getting a single prototype to work is miles away from shipping thousands of reliable products. The gap between a functional unit on an engineer’s bench and a high-yield product from an assembly line is where countless IoT programs fail. This is where a disciplined verification strategy and a relentless focus on Design for Manufacturability (DFM) and Design for Testability (DFT) are critical.

Without this rigor, teams are ambushed by late-stage discoveries that force costly rework and cause factory yields to plummet. High-performing teams treat production readiness as an engineering challenge to be solved from day one of the internet of things software development process.

Structuring Your IoT Verification Strategy

A robust verification plan is a structured, multi-stage process designed to catch issues early when they are cheapest to fix. This ensures the product is reliable from firmware to cloud.

The following checklist outlines the key activities and primary goal for each stage.

IoT Verification Strategy Checklist

| Verification Stage | Key Activities | Primary Goal |
|---|---|---|
| Firmware Unit & Integration | • Automated unit tests for each module • Tests for inter-module communication on target hardware | Verify foundational firmware logic and ensure modules work together correctly. |
| Hardware-in-the-Loop (HIL) | • Run firmware on production hardware • Simulate external inputs/outputs (sensors, network) • Automate edge-case testing (e.g., sensor failure, network loss) | Stress-test the device in a controlled, repeatable environment against a huge range of real-world scenarios. |
| End-to-End System Test | • Test the full data path: device -> gateway -> backend • Validate command-and-control loops • Simulate realistic network conditions and load | Confirm the entire system operates as a cohesive whole under real-world conditions, from device to data center and back. |

This systematic approach creates a testing framework that provides data-driven confidence for critical program decisions at each gate.
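The "automate edge-case testing" activity can be sketched with a simulated sensor that injects read failures into the logic under test. In a real HIL rig the firmware runs on target hardware and the failures are injected electrically or over a test interface. Here everything is simulated in Python, and `SimulatedSensor`, `Controller`, and the three-failure limit are hypothetical.

```python
from typing import Iterable, Optional


class SimulatedSensor:
    """Replays a scripted sequence of readings; None models a failed read."""

    def __init__(self, values: Iterable[Optional[float]]) -> None:
        self._values = iter(values)

    def read(self) -> Optional[float]:
        return next(self._values)


class Controller:
    """Logic under test: latch a fault after repeated consecutive failures."""

    MAX_CONSECUTIVE_FAILURES = 3  # assumed requirement for illustration

    def __init__(self, sensor: SimulatedSensor) -> None:
        self.sensor = sensor
        self.state = "RUNNING"
        self.last_good: Optional[float] = None
        self._failures = 0

    def step(self) -> str:
        if self.state == "FAULT":
            return self.state  # the fault is latched until serviced
        reading = self.sensor.read()
        if reading is None:
            self._failures += 1
            if self._failures >= self.MAX_CONSECUTIVE_FAILURES:
                self.state = "FAULT"
        else:
            self._failures = 0
            self.last_good = reading
        return self.state


def run_scenario(values: list) -> Controller:
    """Drive the controller through one scripted scenario and return it."""
    controller = Controller(SimulatedSensor(values))
    for _ in values:
        controller.step()
    return controller


if __name__ == "__main__":
    transient = run_scenario([20.0, None, None, 21.0, None])
    sustained = run_scenario([20.0, None, None, None, 22.0])
    print("transient glitch:", transient.state)
    print("sustained failure:", sustained.state)
```

Scripted scenarios like these are repeatable, which is what makes them useful as regression tests when firmware changes.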

Navigating The EVT, DVT, and PVT Gates

The journey from prototype to mass production is formally managed through a series of gates: Engineering Validation Test (EVT), Design Validation Test (DVT), and Production Validation Test (PVT). Each gate has clear entry and exit criteria tied to specific business and technical goals, acting as non-negotiable quality checkpoints.

- EVT: Asks, "Does the core design work?" The goal is to prove the device meets key functional requirements and retire major technical risks.

- DVT: Focuses on reliability. The design is frozen, and hardware undergoes intense environmental, stress, and regulatory testing to uncover weaknesses before committing to expensive production tooling.

- PVT: With the design locked, the focus shifts to the factory. Can you build the product reliably, at scale, and within cost targets using the final production line and processes?

The Power Of Design For Testability (DFT)

The most powerful lever for reducing manufacturing costs and improving factory yield is Design for Testability (DFT). This is the practice of designing hardware from day one with the factory test process in mind.

A design that is difficult to test is, by definition, a design that is difficult to build. Integrating DFT principles early isn't a "nice-to-have"; it's a direct investment in a smoother, more predictable production ramp.

Key DFT elements that cannot be ignored include:

- Accessible Test Points: Physical access points on the PCB that allow automated test fixtures to probe critical signals like power rails, clocks, and communication lines, instantly verifying board integrity.

- Boundary Scan (JTAG): A JTAG port provides a direct interface for testing digital logic and programming flash memory on the factory floor, which is essential for complex boards.

- Manufacturing Firmware: A specialized firmware build loaded at the factory to run automated diagnostics, program unique data (serial numbers, security keys), and perform a full hardware health check on every unit before shipment.

Baking these principles into your design dramatically reduces the risk of expensive surprises on the factory floor, ensuring a more efficient ramp to mass production.

Frequently Asked Questions About IoT Development

Navigating an IoT program means fielding high-stakes questions from leadership. Here are direct, operationally focused answers to common challenges in internet of things software development.

What Is the Biggest Hidden Cost in an IoT Project?

The single biggest hidden cost is the long-term operational expense of maintaining a deployed fleet of devices. Teams consistently underestimate the effort and infrastructure required to manage firmware, deploy security patches, and monitor device health over a 5- to 10-year product lifespan.

This long-tail cost includes:

- Over-the-Air (OTA) Infrastructure: The servers, bandwidth, and platforms needed to reliably deliver every update.

- Security Response: Dedicated engineering hours to investigate vulnerabilities, develop and test patches, and deploy them across thousands of devices.

- Device Management: The operational grind of tracking device status, diagnosing field failures, and handling RMA (Return Merchandise Authorization) processes.

Failing to budget for a robust device management platform and a fail-safe OTA update mechanism from day one is a classic—and costly—mistake. It turns planned maintenance into a series of expensive, reactive fire drills.

When Should We Move from a Prototype to a Production Design?

The transition from a prototype to a production-intent design should be triggered the moment you have reached two critical milestones during the EVT (Engineering Validation Test) phase:

- Core product-market fit is confirmed with a functional prototype in users' hands.

- Key technical risks have been retired (e.g., the core sensor technology is proven reliable and accurate).

A prototype is built to prove a concept. A production design, which kicks off the DVT (Design Validation Test) phase, is engineered for reliability, cost-optimization, and manufacturability at scale. Sticking with the prototype design for too long is a trap. It leads to attempts to "productionize" a benchtop device that was never designed for the rigors of mass production, triggering massive rework and schedule delays.

Should We Build Our Own IoT Platform or Use a Cloud Service?

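For the vast majority of companies, using an established managed service such as AWS IoT Core or Azure IoT Hub is the correct strategic move. (Google Cloud IoT Core, once a common third option, was retired in August 2023, so check a provider's current offering and roadmap before committing.) These platforms provide pre-built, scalable infrastructure for device management, data ingestion, security, and analytics.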

Using a managed service dramatically reduces time-to-market and technical risk. It allows your team to focus its limited resources on building the unique features of your product, not on reinventing commodity cloud infrastructure.

Building a custom IoT platform should only be considered under specific conditions:

- Extreme Scale: Your device fleet will be so massive (millions of units) that the per-device cost of public clouds becomes prohibitive.

- Unique Regulatory or Security Needs: You operate in a domain with stringent requirements that cannot be met by off-the-shelf services.

For most IoT programs, the "build vs. buy" decision is clear. Focus your engineering resources on what makes your product unique.

At Sheridan Technologies, we help engineering leaders navigate these complex decisions to de-risk programs and accelerate the path from prototype to production. If you are facing architectural, manufacturing, or verification challenges in your IoT project, consider requesting an expert Architecture Consult to ensure your strategy is built for scale.