It’s a classic, high-stakes mistake in product development. Your team delivers a product that perfectly matches every line in the spec sheet, but it fails spectacularly in the hands of the customer. You built the product right, but you didn't build the right product. That is the gap between design verification and design validation, and for engineering leaders, confusing the two is a fast track to blown schedules, costly recalls, and products that are dead on arrival in the market.

This guide is for the technically literate, time-constrained decision-makers—VPs of Engineering, CTOs, program managers, and lead engineers—who are responsible for de-risking complex hardware programs. We will move beyond simple definitions to provide an operational framework for integrating both disciplines into your development lifecycle. This is not about academic theory; it's about preventing the catastrophic post-launch failures that happen when a product is technically perfect on the engineering bench but useless in the field.

In this guide, you’ll find:

- A clear decision framework for distinguishing verification from validation.

- A realistic operating scenario showing how to apply V&V under project constraints.

- A practical checklist to ensure your V&V strategy is robust and actionable.

The Critical Difference: Decision vs. Criteria

For leaders managing program risk, the distinction between verification and validation is the line between a smooth production ramp and a crisis. Getting this wrong means shipping a product that, while conforming to its blueprint, fails to solve the user's actual problem. This is a monumental waste of engineering resources and a direct path to market failure.

Verification vs. Validation: A Decision Framework

Let's move past textbook definitions. While both are essential quality processes, they serve different masters and answer fundamentally different questions. In regulated industries like medical devices, fumbling this distinction is a primary driver of recalls.

This table frames the difference as a set of choices and criteria.

| Attribute | Design Verification | Design Validation |
|---|---|---|
| Core Question | Did we build the product right? | Did we build the right product? |
| Compared Against | Design inputs: specifications, requirements, technical drawings. | User needs: intended use, real-world conditions, user expectations. |
| Typical Activities | Design reviews, simulations, analysis, unit tests, inspections. | Usability testing, clinical trials, field testing, beta programs. |
| Timing | Happens during development, often before a full prototype exists. | Happens after development on a production-intent or final product. |
| Goal | To find and fix defects and ensure conformance to specs. | To ensure the product is effective and solves the user’s problem. |

Key Insight: Verification is an inward-facing process that checks the design against predetermined technical requirements. Validation is an outward-facing process that checks the finished product against the end user's actual needs in their environment. Those needs are harder to quantify than a spec line, but the evidence that they are met must still be objective. A product can pass every verification test and still fail validation completely.

This isn’t just an academic exercise; it has immediate business implications. A product that is perfectly verified but poorly validated is a solution in search of a problem. On the other hand, rushing to validate an idea without proper verification leads to unreliable, buggy products that destroy customer trust and bury your team in rework. To deliver successful electronic products, you have to master both.

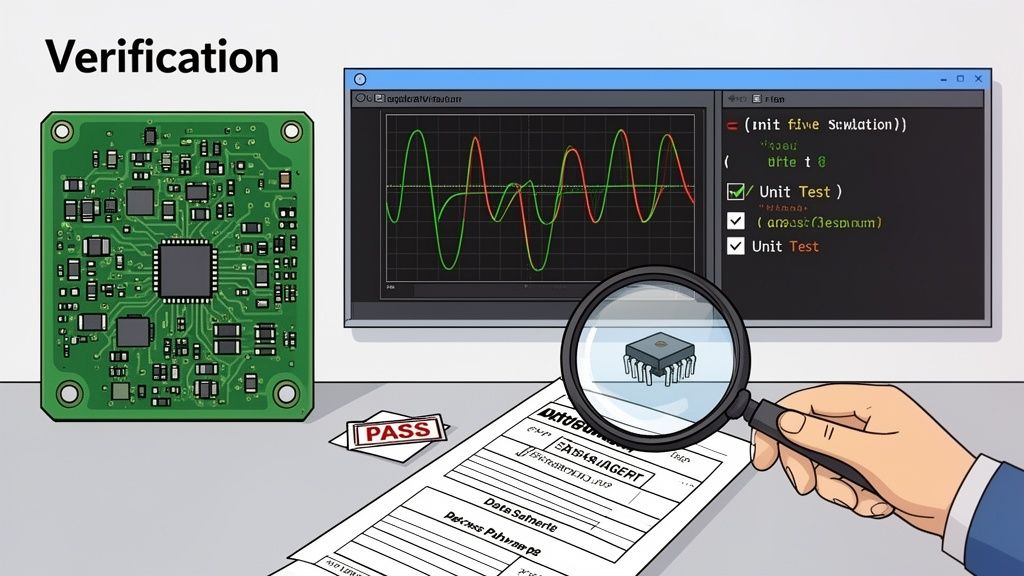

Design Verification: Did You Build the Product Right?

Design verification is the rigorous, engineering-focused process of confirming your product meets all its specified requirements. It's a discipline that looks inward, asking one critical question: Did we build this correctly according to our own blueprint?

The goal is to hunt down and eliminate defects as early as possible—ideally on paper or in simulation—before they get baked into expensive physical prototypes or, worse, find their way into products shipped to customers.

This process is about objective evidence. You’re systematically confirming that design outputs—like schematics, layout files, and firmware—perfectly match the design inputs defined in your requirements documents.

Core Activities in Verification

Verification isn't a single event. It’s a collection of activities woven throughout the design phase, focused on analysis and inspection.

Key verification activities include:

- Design Reviews: Formal peer reviews where engineers scrutinize schematics, PCB layouts, and software architecture to catch errors in logic or implementation before fabrication.

- Analysis and Simulation: Using software tools to predict performance and spot problems computationally. This includes SPICE for circuit simulation, Finite Element Analysis (FEA) for mechanical stress, or Signal Integrity (SI) analysis for high-speed digital designs.

- Static Code Analysis: Automated tools inspect firmware source code without running it, hunting for potential bugs, security vulnerabilities, and violations of coding standards.

- Unit and Integration Testing: For firmware, unit tests check that individual functions behave as specified, while integration tests ensure different software modules interact correctly.

Operating Scenario: Hardware Verification Under Pressure

Context: A team is developing a battery-powered IoT sensor that must last five years in the field. The project is already two weeks behind schedule. The product requirements document (PRD) is clear: the device must have a maximum sleep current of 50 microamps (µA).

During the schematic design phase, the lead engineer performs a critical verification activity: a manual power budget analysis. They meticulously sum the datasheet sleep currents for every component—the microcontroller, radio, sensor, and even passive component leakage.

The initial calculation comes back at 75 µA. That's a 50% overage that guarantees the product will fail its core battery life requirement in the field, leading to costly and reputation-damaging failures.

Sheridan Perspective: This is a classic verification win that demonstrates our philosophy of early risk reduction. The defect was found on paper, long before a single PCB was ordered. The cost to fix it is minimal—a few hours of engineering time to select a lower-power component. If this had slipped past verification, it would have been discovered during DVT power testing, forcing a costly hardware revision and setting the project schedule back by weeks. This is the discipline that prevents late-stage chaos.
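The power budget check in this scenario is simple enough to keep as a script next to the schematic. The following is a minimal sketch, not the team's actual tool: the per-component currents are hypothetical placeholders chosen only so that they sum to the 75 µA figure in the scenario, and the function name `power_budget` is an assumption.

```python
# Sleep-current budget check against the PRD limit.
# The per-component values are hypothetical, picked to reproduce the
# 75 uA total from the scenario; real values come from datasheets.
SLEEP_BUDGET_UA = 50.0  # maximum sleep current from the PRD, in microamps

sleep_currents_ua = {
    "microcontroller": 30.0,
    "radio": 25.0,
    "sensor": 15.0,
    "passive_leakage": 5.0,
}


def power_budget(currents_ua, limit_ua):
    """Sum per-component sleep currents and compare the total to the limit."""
    total = sum(currents_ua.values())
    overage_pct = (total - limit_ua) / limit_ua * 100.0
    return total, overage_pct, total <= limit_ua


total_ua, overage_pct, passes = power_budget(sleep_currents_ua, SLEEP_BUDGET_UA)
print(f"Total sleep current: {total_ua:.1f} uA (limit {SLEEP_BUDGET_UA:.1f} uA)")
print(f"Overage: {overage_pct:.0f}% -> {'PASS' if passes else 'FAIL'}")

# List contributors from largest to smallest so the biggest offender
# is the first candidate for a lower-power replacement.
for name, ua in sorted(sleep_currents_ua.items(), key=lambda kv: kv[1], reverse=True):
    print(f"  {name}: {ua:.1f} uA")
```

Keeping the budget in a script rather than a one-off calculation means it can be rerun every time a component changes, which turns the analysis into a repeatable verification step.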

Effective verification is foundational for a smooth handoff to manufacturing. For those in highly regulated fields, understanding the full V&V scope is crucial; you can learn more about this in our guide to medical device verification and validation. By focusing on "building the product right," verification lays the groundwork for the next critical phase: ensuring you built the "right product."

Design Validation: Did You Build The Right Product?

While verification confirms your product meets its technical specifications, design validation answers a far more critical business question: Did you build the right product? This process shifts the focus from internal blueprints to the external world, proving the product actually solves the user's problem in its intended operating environment.

Validation isn't about double-checking schematics. It’s about putting the final, integrated product through its paces under real or simulated use conditions. The goal is to generate objective evidence that the product is effective, safe, and reliable for the end-user. It's the ultimate reality check against user needs, not just engineering specs.

Core Validation Methods

Validation involves a battery of tests designed to expose the product to the stresses and scenarios it will face after launch. These activities are typically performed on production-intent hardware from the Design Validation Test (DVT) phase.

Common validation activities include:

- Field Testing and Beta Programs: Deploying the product with a select group of actual end-users to gather unfiltered feedback on performance and usability in their natural environment.

- Usability Studies: Observing users as they interact with the product to pinpoint design flaws or confusing interfaces. A key part of this is often accomplished through formal User Acceptance Testing (UAT).

- Environmental and Stress Testing: Pushing the product to its specified limits—temperature extremes, humidity, water ingress (IP rating), vibration, and shock—to ensure it survives and functions reliably.

- Regulatory and Compliance Testing: Performing formal tests required for certifications like FCC, UL, or other standards specific to your industry.

Operating Scenario: Product Validation in a Harsh Environment

Context: A team is developing a ruggedized agricultural sensor for monitoring soil moisture in large-scale commercial farming. The PRD specifies an IP67 rating (dust-tight and protected against temporary immersion in water) and the ability to withstand the constant vibration of heavy farm machinery. The company has a reputation for reliability to uphold.

The verification process was flawless. The schematics passed every review, and the bring-up of the initial EVT prototypes went smoothly. By all internal measures, the team "built the product right."

Now, it’s time for validation. The DVT units, which are functionally identical to the final product, are put through a rigorous validation plan derived from user requirements and a Failure Modes and Effects Analysis (FMEA).

Sheridan Perspective: This is where our prototype-to-production thinking pays off. Verification confirmed the circuit works on the bench, but validation proves the product works in the field. The team doesn't just dunk it in a bucket; they send it to a certified lab for formal IP67 testing. They mount it on a shaker table to simulate years of rattling on a tractor, all while monitoring data output for stability.

During vibration testing, the team makes a critical discovery. After 100 hours, 15% of the units start experiencing intermittent data dropouts. The root cause is a connector that, while perfectly fine on a quiet lab bench, couldn't handle sustained, high-frequency vibration. This failure would have been catastrophic if first discovered by customers in the field, leading to warranty claims and damaging the company's brand. This is the core of our philosophy: stress the design early to find failures before your customer does.
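Monitoring data output for stability during a vibration run can be reduced to a simple check: flag any unit whose sample log contains a gap longer than expected. The sketch below assumes each unit logs a timestamp per received sample; the unit IDs, the 1-second sample interval, the 1.5x tolerance, and both function names are hypothetical.

```python
def has_dropout(timestamps_s, expected_interval_s, tolerance=1.5):
    """True if any gap between consecutive samples exceeds
    tolerance x the expected sample interval."""
    return any(
        later - earlier > expected_interval_s * tolerance
        for earlier, later in zip(timestamps_s, timestamps_s[1:])
    )


def dropout_rate(units, expected_interval_s):
    """Return (fraction of units with at least one dropout, list of failing unit IDs)."""
    failing = [uid for uid, ts in units.items() if has_dropout(ts, expected_interval_s)]
    return len(failing) / len(units), failing


# Hypothetical sample logs (seconds since start of the vibration run).
units = {
    "DVT-01": [0, 1, 2, 3, 4, 5],
    "DVT-02": [0, 1, 2, 5, 6, 7],  # 3-second gap between samples
    "DVT-03": [0, 1, 2, 3, 4, 5],
    "DVT-04": [0, 1, 2, 3, 4, 5],
}

rate, failing = dropout_rate(units, expected_interval_s=1.0)
print(f"{rate:.0%} of units showed dropouts: {failing}")
```

A per-unit pass/fail like this is what lets the team state a failure rate for the build instead of an anecdote about one flaky board.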

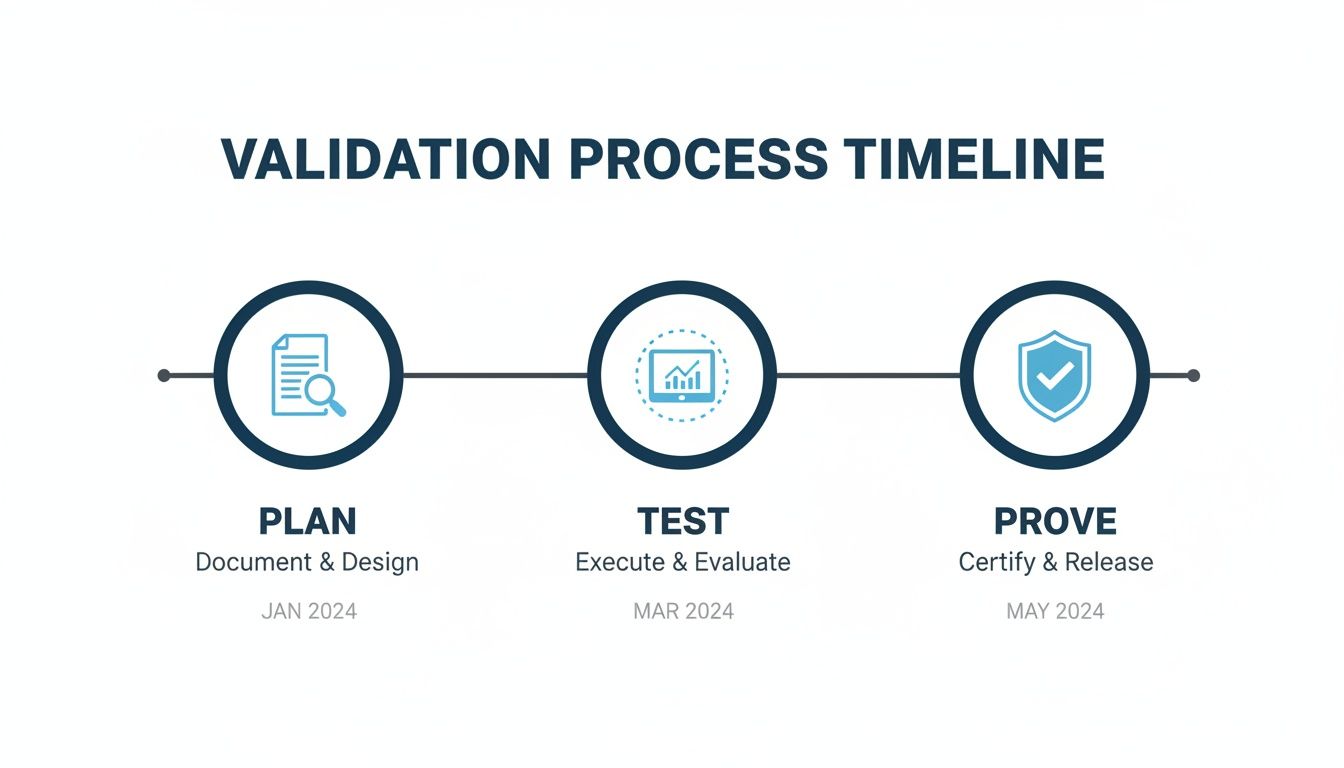

Integrating V&V Into Your Product Development Lifecycle

Too many teams treat verification and validation as a final checklist. This is a massive, costly mistake. When V&V is just a gate at the end of a phase, you’re not de-risking the program; you’re just documenting a failure right before it becomes exponentially more expensive to fix.

Effective V&V isn’t a final exam. It’s a continuous, overlapping set of disciplines woven into your development process. At Sheridan Technologies, we see the entire product lifecycle as a systematic de-risking exercise. The focus of your V&V efforts must shift as the product matures, ensuring you apply the right rigor at the right time.

As the timeline shows, real validation is a campaign. It starts with a solid plan, moves through methodical testing, and ends with objective proof that you’ve built what the customer actually needs.

Mapping V&V to Development Phases (EVT, DVT, PVT)

The standard Engineering Validation Test (EVT), Design Validation Test (DVT), and Production Validation Test (PVT) builds provide a perfect framework for this phased approach.

During EVT, your focus is almost entirely on verification. You’re working with the first prototypes. The goal is to answer fundamental questions: Do the power rails come up correctly? Does the microcontroller boot? This is all about proving subsystems work according to technical specifications.

By DVT, the focus shifts decisively to validation. You’re now using production-intent hardware, and the mission is to prove the product meets all user requirements in real-world conditions. This is where you run the gauntlet of environmental chambers, drop tests, full regulatory compliance suites, and beta tests with end-users.

Finally, PVT is about validating the manufacturing process. Can your factory build the product you validated at DVT, consistently and at scale? For a deeper look at these builds, check out our complete guide on the product development lifecycle stages.

V&V Activities Mapped to Development Phases

This table breaks down how the focus and specific tasks for verification and validation should evolve across the main hardware builds.

| Phase | Primary Focus | Example Verification Activities | Example Validation Activities |
|---|---|---|---|
| EVT | Verification-heavy. Confirming core functionality on early prototypes. | – PCB Bring-up: Checking power, clocks, boot chains. – Functional Tests: Verifying basic I/O operation. – Firmware Unit Tests: Confirming software modules meet specs. | – Early User Feedback: Informal usability checks on key features. – Core Functionality Checks: Does it perform its primary function at a basic level? |
| DVT | Validation-heavy. Proving the production-intent design is robust, reliable, and compliant. | – Full Regression Testing: Re-running all verification tests on the new design. – Code Reviews: Final checks on firmware for stability. | – Environmental Testing: Temperature, humidity, shock, vibration. – Regulatory Testing: FCC, CE, UL, etc. – Full Usability & Field Testing: Beta programs with real end-users. |
| PVT | Process Validation. Ensuring the manufacturing process is stable and repeatable. | – First Article Inspection (FAI): Verifying that pilot run units meet all specs. – Test Fixture Verification: Confirming manufacturing test jigs are accurate. | – Out-of-Box Experience (OOBE): Validating packaging, instructions, and initial setup. – Process Capability Analysis: Confirming sustained quality and yield. |

Sheridan Perspective: The handoff from a verification-focused EVT to a validation-focused DVT is one of the most critical risk points in hardware development. If your verification in EVT was sloppy, DVT will get completely derailed by basic functional bugs. The time and budget you allocated for crucial reliability testing will be burned just trying to get the damn thing to work.

This is a core tenet of our operational philosophy: verification discipline before downstream failure. By structuring your V&V plan around these distinct phases, you build a repeatable process that catches defects early and clears the path for a predictable production ramp. You can see a similar model in how modern CI/CD pipelines operate, where automated tests (verification) run constantly before controlled user exposure (validation).

Common Pitfalls and How to Avoid Them

Even sharp engineering teams can stumble into expensive verification and validation traps. Knowing the warning signs is the first step to avoiding a program rescue mission down the road.

Pitfall 1: Untestable Requirements

The most frequent point of failure starts with requirements that are ambiguous, subjective, or lumped together, making them impossible to prove with objective evidence.

- Warning Sign: Your requirements document is littered with words like "user-friendly," "robust," or "fast" without any hard numbers attached.

- Sheridan Recommendation: Break down every requirement until it is atomic, specific, and directly testable. Instead of "user-friendly," write "A first-time user must complete setup in under 3 minutes with a 95% success rate." This gives your test team unambiguous, pass/fail criteria.
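Once a requirement is written this way, the pass/fail decision becomes mechanical. As a rough sketch, the "under 3 minutes with a 95% success rate" example could be evaluated from usability trial data like this; the trial times are invented for illustration, and `evaluate_setup_requirement` is a hypothetical helper name.

```python
SETUP_LIMIT_S = 180       # "under 3 minutes"
REQUIRED_SUCCESS = 0.95   # "95% success rate"


def evaluate_setup_requirement(setup_times_s, limit_s=SETUP_LIMIT_S,
                               required=REQUIRED_SUCCESS):
    """Return (success_rate, passed) for a list of per-user setup times.

    A time of None means the user never completed setup.
    """
    if not setup_times_s:
        raise ValueError("no trial data")
    successes = sum(1 for t in setup_times_s if t is not None and t < limit_s)
    rate = successes / len(setup_times_s)
    return rate, rate >= required


# Hypothetical trial with 20 first-time users:
# one took longer than the limit, one gave up (None).
trial = [95, 120, 143, 88, 170, 101, 132, 160, 99, 175,
         110, 150, 90, 125, 140, 105, 165, 115, 210, None]

rate, passed = evaluate_setup_requirement(trial)
print(f"Success rate: {rate:.0%} -> {'PASS' if passed else 'FAIL'}")
```

Note that this only computes the observed rate. How many users you need in the trial before that rate is trustworthy is a separate decision that belongs in the test plan.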

Pitfall 2: V&V as a Final Checklist

This pitfall is treating verification and validation as the last gate to rush through before production, which turns V&V into a reactive quality check instead of a proactive, risk-reducing discipline.

- Warning Sign: Test protocols are being designed at the same time as the DVT build. The question, "How are we going to test this?" is being asked for the first time on production-intent hardware.

- Sheridan Recommendation: Draft the V&V plan alongside the requirements document. Treat testability as a critical design feature from day one. This forces you to design in the necessary test points and firmware hooks from the beginning, transforming testing from an afterthought into a core engineering activity.

Pitfall 3: Insufficient Test Coverage Based on Risk

Teams under pressure often fall back on "happy path" testing, proving the product works under ideal conditions. This leaves huge holes in coverage for edge cases and fault conditions—exactly where products fail in the real world.

- Warning Sign: The test plan is just a list of features with no clear connection to a risk analysis document like an FMEA. All tests are treated as equally important.

- Sheridan Recommendation: Your risk analysis must drive your test plan. High-risk failure modes demand more rigorous and exhaustive test cases. This ensures your limited testing resources are aimed squarely at the threats that pose the greatest danger to your product's success and your users' safety.
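One common way to let an FMEA drive the test plan is to rank failure modes by Risk Priority Number (severity x occurrence x detection) and assign deeper testing above a cutoff. The sketch below assumes that scoring scheme; the failure modes, their scores, and the threshold of 100 are illustrative assumptions, not recommended values.

```python
from dataclasses import dataclass


@dataclass
class FailureMode:
    name: str
    severity: int    # 1 (negligible) to 10 (hazardous)
    occurrence: int  # 1 (remote) to 10 (near certain)
    detection: int   # 1 (almost always detected) to 10 (undetectable)

    @property
    def rpn(self) -> int:
        """Risk Priority Number: severity x occurrence x detection."""
        return self.severity * self.occurrence * self.detection


def prioritize(modes, high_risk_threshold=100):
    """Sort failure modes by descending RPN and tag each with a test depth."""
    ranked = sorted(modes, key=lambda m: m.rpn, reverse=True)
    return [
        (m.name, m.rpn, "exhaustive" if m.rpn >= high_risk_threshold else "standard")
        for m in ranked
    ]


# Hypothetical FMEA entries with illustrative scores.
fmea = [
    FailureMode("status LED too dim", severity=2, occurrence=4, detection=2),
    FailureMode("connector fretting under vibration", severity=8, occurrence=5, detection=6),
    FailureMode("enclosure seal leak", severity=9, occurrence=3, detection=4),
]

plan = prioritize(fmea)
for name, rpn, depth in plan:
    print(f"{rpn:4d}  {depth:10s}  {name}")
```

The ranking itself matters less than the link it creates: every test case can point back to the failure mode that justifies it, and low-risk items stop consuming the same lab time as high-risk ones.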

Practical Checklist: What to Do on Monday Morning

The gap between a V&V strategy and disciplined execution is where projects fall apart. This checklist is a practical tool for engineering leaders to enforce accountability and drive a project from prototype to production with data-backed confidence.

Planning Phase

- [ ] Requirements Clarity: Are all requirements atomic, unambiguous, and testable?

- [ ] Testability by Design: Was the V&V plan finalized before the DVT design freeze?

- [ ] Risk-Driven Focus: Does your test plan map directly to your FMEA or risk analysis?

- [ ] Resource Allocation: Have you secured the budget, equipment, and personnel for the entire V&V campaign?

Execution Phase

- [ ] Traceability: Is every test protocol explicitly linked back to a specific requirement?

- [ ] Objective Evidence: Are all test results, especially failures, meticulously documented with hard data, logs, and photos? This is especially critical when you test a printed circuit board.

- [ ] Failure Analysis: Is there a formal process for root cause analysis of every failure?

Review and Release Phase

- [ ] Complete Traceability Matrix: Does the final V&V summary report show a definitive pass/fail status for every single requirement?

- [ ] Validation Confirmed: Has the product been properly validated with real end-users in its intended environment?

- [ ] All Issues Closed: Are all high- and medium-priority bugs and test failures fully resolved and their fixes verified?

A "pass" with missing evidence is just as worthless as a "fail" without a root cause analysis. The entire point is to generate a high-integrity, auditable data set that proves the product is ready.
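Several of these checklist items can be audited automatically before a release review. The following is a minimal sketch under stated assumptions: the record fields, the requirement and test IDs, and the `audit_traceability` helper are all hypothetical, and a real program would pull this data from its requirements and test management tools.

```python
from dataclasses import dataclass, field


@dataclass
class ResultRecord:
    test_id: str
    requirement_id: str
    status: str                                   # "pass" or "fail"
    evidence: list = field(default_factory=list)  # logs, data captures, photos
    root_cause: str = ""                          # expected whenever status is "fail"


def audit_traceability(requirement_ids, results):
    """Return a list of gaps; an empty list means the matrix is complete."""
    gaps = []
    covered = {r.requirement_id for r in results}
    for req in requirement_ids:
        if req not in covered:
            gaps.append(f"{req}: no linked test")
    for r in results:
        if r.requirement_id not in requirement_ids:
            gaps.append(f"{r.test_id}: linked to unknown requirement {r.requirement_id}")
        if r.status == "pass" and not r.evidence:
            gaps.append(f"{r.test_id}: pass recorded without evidence")
        if r.status == "fail" and not r.root_cause:
            gaps.append(f"{r.test_id}: fail recorded without root cause")
    return gaps


# Hypothetical requirement IDs and test records.
requirements = ["REQ-001", "REQ-002", "REQ-003"]
results = [
    ResultRecord("TP-01", "REQ-001", "pass", ["sleep_current.csv"]),
    ResultRecord("TP-02", "REQ-002", "pass"),
    ResultRecord("TP-03", "REQ-002", "fail"),
]

gaps = audit_traceability(requirements, results)
for gap in gaps:
    print(gap)
```

Running a check like this before the review keeps the meeting focused on real engineering risk rather than on discovering that a record is missing.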

Frequently Asked Questions

Even with clear definitions, thorny issues pop up during project execution. Let’s tackle a few common questions we hear from teams in fast-paced or regulated fields.

Can a Product Pass Verification but Fail Validation?

Absolutely. This is a classic and expensive project failure. It happens when a product meets every technical requirement (verification) but completely misses the mark with users (validation). Think of a medical device with an alarm verified to produce an 85 dB tone. The spec covers loudness only, so if the pitch of that tone is hard for older users to hear in a noisy hospital, the product has failed validation. It’s not effective in its real-world context.

How Do Agile Methodologies Handle V&V?

Agile doesn’t get rid of V&V; it breaks it into smaller, more frequent cycles.

- Verification: Happens constantly within a sprint via unit tests, static code analysis, and peer reviews.

- Validation: Happens at the end of a sprint, typically through demos with product owners or direct feedback on the latest shippable increment.

This iterative rhythm prevents V&V from becoming a monolithic bottleneck.

What Is the Relationship Between V&V and Regulatory Compliance?

In regulated industries like medical devices (FDA 21 CFR 820) or aerospace (DO-178C), V&V isn’t just a best practice; it is a condition of regulatory approval or certification. Regulatory bodies require objective, documented evidence that your V&V activities were planned, executed, and recorded. This documentation, often in a traceability matrix, is your proof of diligence. This critical market need is fueling massive growth, with the product design V&V solutions market projected to hit USD 14.9 billion by 2035. You can read the full research on the V&V market from Future Market Insights to dig deeper.

At Sheridan Technologies, we build rigorous V&V plans into every program, ensuring your product is not only technically sound but also ready for real-world success. If your V&V strategy has gaps, let our experts help you de-risk your program. Request a V&V Plan Review today.