
For engineering and operations leaders in complex product development, the goals are clear: compress time-to-market, de-risk production ramp, and improve in-field reliability. Digital twinning in manufacturing has evolved from an academic concept into a mission-critical operational tool for hitting these targets. When implemented with discipline, a digital twin can solve stubborn problems in new product introduction (NPI), manufacturing yield, and system verification that plague even the most experienced teams.

This guide is for technical leaders—VPs of Engineering, CTOs, Program Managers, and Manufacturing leads—who need to move beyond the hype and understand how to deploy digital twins to solve concrete operational and development challenges. This is not about building a photorealistic factory replica; it’s about using focused, data-driven models to make better decisions under pressure. We will cover:

- Decision Framing: What a digital twin is (and isn’t) in a practical manufacturing context.

- Implementation Strategy: How to plan and execute a digital twin program using a phased “Crawl, Walk, Run” approach.

- Risk Mitigation: Common failure modes that derail these initiatives and how to avoid them.

What Is a Digital Twin in a Manufacturing Context?

For a VP of Engineering or Manufacturing Lead, a digital twin must be more than a 3D model. In a practical setting, it is a dynamic, high-fidelity virtual replica of a physical asset, process, or system that is continuously updated with real-world operational data. This creates a closed-loop feedback system between the physical and digital worlds, enabling analysis, prediction, and optimization that would be too slow, costly, or risky to perform on live hardware. The market reflects this shift from experiment to essential tool, with projections showing the manufacturing digital twin market growing from $28.91 billion in 2025 to $328.29 billion by 2030, according to industry research.

This guide frames the digital twin as a strategic asset for solving tangible development and production challenges, from early-stage verification to full-scale manufacturing.

From Static Model to Living System

A digital twin bridges the physical and digital worlds, allowing you to run analyses, predict outcomes, and optimize processes in a virtual environment. This is especially vital in high-stakes industries like aerospace, defense, and medical devices, where Sheridan Technologies delivers end-to-end hardware and firmware integration.

An effective digital twin is built on three pillars:

- A High-Fidelity Model: The virtual representation of the asset, whether a single robotic arm, a CNC machine, or a complete assembly line. Its level of detail should match the specific problem you need to solve.

- A Live Data Connection: The heartbeat of the twin. Sensors on the physical asset continuously feed real-time operational data—temperature, vibration, cycle times, state information—to the virtual model.

- Analytics and Simulation: The twin uses this live data to simulate future states, predict potential failures, and test optimization scenarios without disrupting live production.
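As a rough illustration of how the three pillars fit together, the sketch below pairs a deliberately simple model with a sync step for live data and a forward simulation for what-if questions. The `MotorThermalTwin` class, its first-order thermal model, and every parameter value are assumptions chosen for illustration; a real twin would use a model and constants fitted to the actual asset.

```python
from dataclasses import dataclass


@dataclass
class MotorThermalTwin:
    """Hypothetical first-order thermal model of a motor winding."""
    temp_c: float             # current estimated winding temperature
    ambient_c: float = 25.0   # assumed ambient temperature
    r_th: float = 0.5         # assumed thermal resistance, degC per watt
    c_th: float = 200.0       # assumed thermal capacitance, joules per degC

    def step(self, loss_w: float, dt_s: float) -> float:
        """Pillar 1 (model): advance the state by dt_s under a heat loss."""
        heat_out_w = (self.temp_c - self.ambient_c) / self.r_th
        self.temp_c += dt_s * (loss_w - heat_out_w) / self.c_th
        return self.temp_c

    def sync(self, measured_c: float, gain: float = 0.5) -> None:
        """Pillar 2 (live data): pull the estimate toward a sensor reading."""
        self.temp_c += gain * (measured_c - self.temp_c)

    def what_if(self, loss_w: float, horizon_s: float, dt_s: float = 1.0) -> float:
        """Pillar 3 (simulation): project forward on a copy of the state."""
        trial = MotorThermalTwin(self.temp_c, self.ambient_c, self.r_th, self.c_th)
        for _ in range(int(horizon_s / dt_s)):
            trial.step(loss_w, dt_s)
        return trial.temp_c


twin = MotorThermalTwin(temp_c=40.0)
twin.sync(measured_c=50.0)  # a live reading arrives
projected = twin.what_if(loss_w=100.0, horizon_s=2000.0)
```

Because `what_if` runs on a copy, the projection never disturbs the state that tracks the physical asset, which is the property that makes the twin safe to experiment on.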

The core value is this: A digital twin lets you ask complex “what-if” questions about your physical operations and get data-driven answers in near-real time. This drastically de-risks critical decisions, from early EVT builds all the way to full-scale production.

This capability is about building a safe, virtual testbed for achieving operational excellence. For example, you can use a twin to validate complex updates to your manufacturing IT services before they go live on the factory floor, preventing costly downtime. By shifting from reactive problem-solving to proactive optimization, engineering leaders can build a powerful and lasting competitive advantage.

Understanding the Core Components of a Manufacturing Digital Twin

A digital twin is not a standalone 3D model. For digital twinning in manufacturing to deliver operational value, three components must work in concert: the physical asset on the factory floor, its digital counterpart, and the constant stream of data connecting them. This connection creates the feedback loop for analysis and prediction.

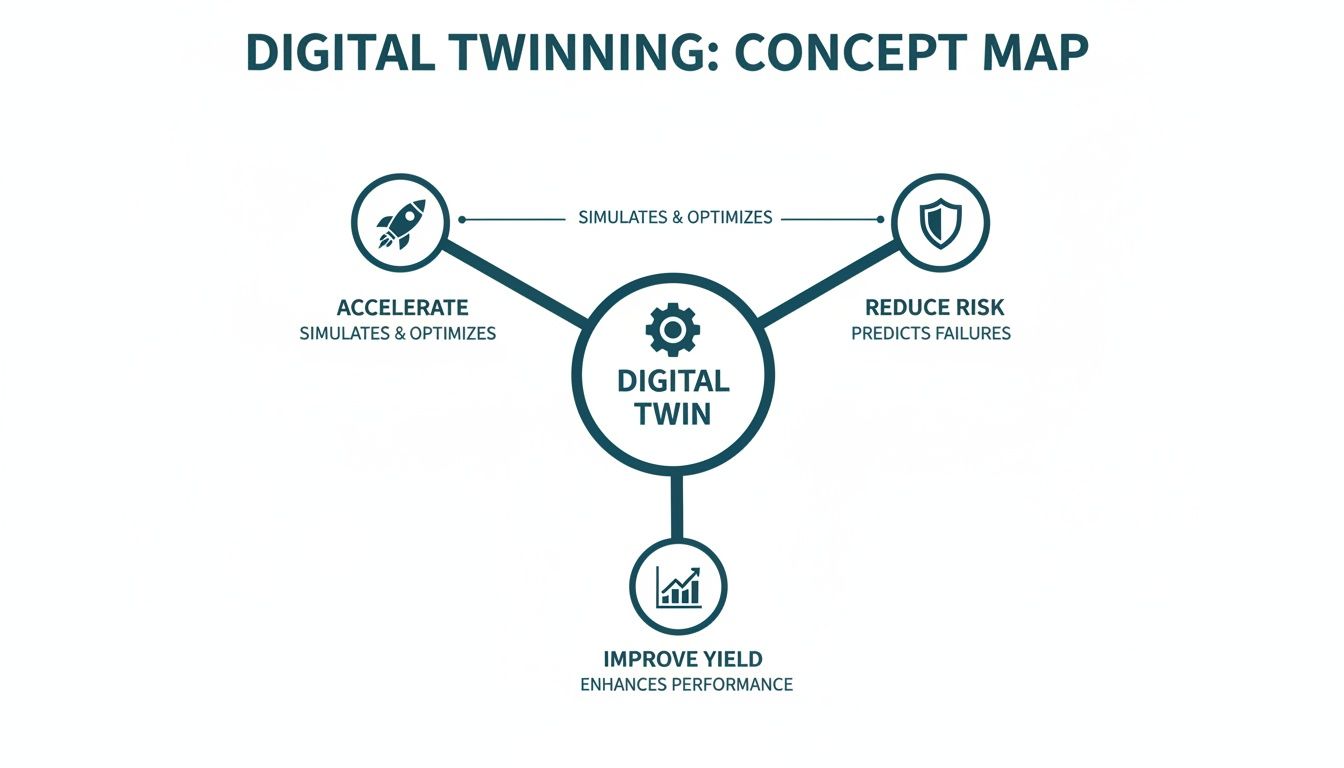

At Sheridan Technologies, we see that the entire structure rests on clean, reliable data. It starts at the embedded systems level. If the hardware and firmware collecting the data aren’t robust and instrumented for testability, you’re feeding garbage into your twin, rendering even the most advanced model useless. This map shows how a digital twin acts as a central hub, translating raw data into tangible business outcomes: accelerated development, reduced risk, and improved yield.

The twin acts as a bridge, turning information into action across the entire product lifecycle.

The Physical to Digital Architecture

The architecture of a manufacturing digital twin connects the shop floor to the software model. It’s a multi-layered system where each layer plays a critical role in ensuring data flows correctly to generate actionable insights.

- The Physical Layer: The factory floor—the machine, robot, or conveyor belt itself, instrumented with sensors measuring physical properties like temperature, vibration, pressure, or vision system output. These sensors and actuators are the twin’s interface to the real world.

- The Communication Layer: Sensor data must be transmitted reliably from the asset to the model. This is typically handled by protocols such as MQTT, a lightweight publish/subscribe protocol built for constrained devices and unreliable networks, or OPC UA, an industrial standard for machine-to-machine data exchange.

- The Data Ingestion & Processing Layer: Raw sensor data is often noisy and unstructured. This layer, which can reside on an edge server or in the cloud, cleans, normalizes, and aggregates the data. It also enriches the stream with contextual data from historical logs or quality reports, often requiring robust data extraction solutions for manufacturing to pull structured information from disparate sources.

- The Modeling & Simulation Layer: The brain of the operation where the virtual model resides. Processed data continuously updates this model, which is then used to run simulations, perform analytics, or train machine learning algorithms for predictive tasks.

- The Application & Visualization Layer: The user-facing dashboard or interface. This is where engineers, managers, and operators explore the twin, get at-a-glance insights, and run “what-if” scenarios without impacting physical hardware.
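To make the data ingestion and processing layer more concrete, here is a minimal sketch of the clean, normalize, and aggregate steps described above. The function names, the plausible-range limits, and the sample readings are all hypothetical; a production pipeline would also handle timestamps, units metadata, and sensor-specific filtering.

```python
from statistics import mean
from typing import List, Optional


def clean(readings: List[Optional[float]], low: float, high: float) -> List[float]:
    """Drop missing values and readings outside the plausible sensor range."""
    return [r for r in readings if r is not None and low <= r <= high]


def to_celsius(values_f: List[float]) -> List[float]:
    """Normalize Fahrenheit readings to Celsius."""
    return [(v - 32.0) * 5.0 / 9.0 for v in values_f]


def aggregate(values: List[float], window: int) -> List[float]:
    """Average consecutive, non-overlapping windows to reduce noise."""
    return [
        mean(values[i:i + window])
        for i in range(0, len(values) - window + 1, window)
    ]


# Made-up raw readings containing one dropout (None) and one spike (999.0).
raw_f = [68.0, None, 69.8, 999.0, 71.6, 73.4]
processed = aggregate(to_celsius(clean(raw_f, low=-40.0, high=300.0)), window=2)
```

Keeping each step a small pure function makes it easy to test the pipeline in isolation before it feeds the modeling layer.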

Choosing the Right Model Fidelity

Not all digital twins are created equal, nor should they be. The level of detail in your model, or its fidelity, must be tailored to the specific problem you are trying to solve. A model that is too simple may yield inaccurate conclusions, while one that is too complex will be slow, expensive to maintain, and a poor use of resources.

A common failure mode is over-engineering the twin. The objective isn’t to build a photorealistic replica of reality. It’s to build a model that is good enough to support a specific, high-value decision. Aligning model fidelity with the business case is essential for achieving a tangible return on investment.

The right approach depends on the product lifecycle stage and your objectives—whether performing virtual verification during EVT or optimizing yield during PVT. The table below breaks down the primary model types.

Digital Twin Model Types Compared

Choosing the right type of digital twin model is a critical first step. It dictates the scope, complexity, and ultimately the ROI of your project. This table compares the primary approaches to help you match the model to your mission.

| Model Type | Description | Best Use Case | Data Requirement | Pros | Cons |
|---|---|---|---|---|---|
| Component Twin | A model of a single, isolated part, like a motor or a pump. | Predictive maintenance, failure analysis of a specific component. | Low (e.g., vibration, temperature data from one asset). | Fast to build, low computational cost, quick ROI. | Limited scope; cannot analyze system-level interactions. |
| Asset Twin | A model of a complete piece of equipment, like a CNC machine or a robotic arm. | Performance optimization, throughput analysis for a single machine. | Medium (data from multiple sensors on one asset). | Provides a holistic view of a single asset’s health and efficiency. | Does not account for upstream or downstream process dependencies. |
| Process/Line Twin | A model of an entire production line or a series of interconnected processes. | Identifying bottlenecks, simulating workflow changes, virtual commissioning. | High (data from many assets, MES, and ERP systems). | Allows for system-level optimization and “what-if” analysis. | Complex to build, high data integration effort, requires more resources. |
| System Twin | A model of the entire factory or supply chain, a “system of systems.” | Strategic planning, supply chain resilience, factory layout optimization. | Very High (data from all operations, suppliers, and logistics). | Enables holistic, strategic decision-making across the entire value chain. | Very high complexity, significant investment, long implementation time. |

These models can be seen as building blocks. Many successful digital twin strategies begin with a high-value component or asset twin to prove ROI, then scale to a more complex process or system twin over time. The key is to start with a clear, narrowly defined objective.

How Digital Twins Drive Real-World Business Outcomes

A digital twin program that doesn’t deliver measurable business impact is an expensive R&D project. For VPs of Engineering and Operations, the value of digital twinning in manufacturing is measured by its direct impact on cost, speed, and risk. A successful twin isn’t just an engineering model; it’s a strategic asset that pays for itself by reducing inefficiency and waste.

Slash Time to Market

In hardware development, speed is a competitive advantage. Digital twins create a virtual sandbox for verification and validation to occur in parallel with physical prototyping, radically compressing development timelines.

- Virtual Commissioning: Fully testing and debugging PLC and robotics code in a simulation before hardware reaches the factory floor. This practice can cut on-site deployment time from weeks to days and significantly de-risks production ramp-up.

- Early Design Validation: Running thousands of performance simulations on a virtual prototype to catch deep-seated design flaws in software, firmware, and hardware interactions long before committing to expensive physical prototypes.

- Parallel Workstreams: Enabling firmware and software teams to develop and test against a digital twin of the hardware months before physical boards are fabricated, breaking a common bottleneck in complex electronics projects.

Front-loading verification is a game-changer. Finding a critical integration bug in a simulation during EVT, instead of during a late-stage DVT build, saves immense cost and protects the launch schedule.

Cut Operational Costs and Eliminate Downtime

On the factory floor, a digital twin improves efficiency and reliability. By creating a living model of production assets, teams can transition from a reactive “break-fix” cycle to a proactive, predictive operational posture. This shift directly impacts the bottom line by preventing costly interruptions. For instance, a twin can model and optimize material flow through a complex line—like those found in a sophisticated industrial IoT solution—to dissolve bottlenecks before they form.

One of the most powerful applications is predictive maintenance. By continuously comparing real-time sensor data against a physics-based model, the twin can flag the deviations that precede a component failure. Maintenance can then be scheduled when it is actually needed, before a catastrophic failure shuts down the line.
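One simple way to picture the comparison between sensor data and model output is a residual monitor: track the gap between what the sensor measures and what the model predicts, and raise a flag when the average gap over a recent window exceeds a threshold. The sketch below is an assumption-laden illustration, not a description of any particular product; the `ResidualMonitor` class, the window size, and the threshold are hypothetical.

```python
from collections import deque


class ResidualMonitor:
    """Flags sustained disagreement between measurement and model."""

    def __init__(self, threshold: float, window: int = 5) -> None:
        self.threshold = threshold
        self.residuals = deque(maxlen=window)  # keeps only the latest values

    def update(self, measured: float, predicted: float) -> bool:
        """Record one sample; return True if the rolling mean residual is high."""
        self.residuals.append(abs(measured - predicted))
        window_full = len(self.residuals) == self.residuals.maxlen
        rolling_mean = sum(self.residuals) / len(self.residuals)
        return window_full and rolling_mean > self.threshold


monitor = ResidualMonitor(threshold=2.0, window=3)
# (measured, predicted) pairs: three healthy samples, then a growing drift.
samples = [(50.0, 50.5), (51.0, 50.5), (50.0, 50.2),
           (55.0, 50.0), (56.0, 50.0), (57.0, 50.0)]
flags = [monitor.update(m, p) for m, p in samples]
```

Averaging over a window rather than reacting to a single sample is a deliberate choice here: it trades a little detection delay for fewer false alarms from sensor noise.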

Quantifying the Return on Investment

A business case for a digital twin must be built on concrete metrics. Industry benchmarks and real-world deployments show a compelling ROI, with some manufacturers reporting up to a 50% reduction in development time and significant efficiency gains in production. For example, Unilever’s AI-powered digital twin, deployed across more than 300 factories, is projected to save $2.8 million annually on top of productivity gains of 1 to 3%. This is the type of large-scale optimization that Sheridan Technologies’ clients in robotics and industrial automation are pursuing.

To build your business case, focus on the metrics that matter to leadership.

Key Metrics for Your Business Case:

| Category | Metric | How a Digital Twin Helps |
|---|---|---|
| Development | Reduction in Physical Prototypes | Enables exhaustive virtual testing, cutting the number of expensive hardware spins required. |
| Production | Overall Equipment Effectiveness (OEE) | Optimizes asset utilization and minimizes downtime through predictive maintenance and process simulation. |
| Quality | First Pass Yield (FPY) | Simulates process parameters to find the optimal settings that reduce defects from the start. |
| Operations | Mean Time to Repair (MTTR) | Pinpoints the root cause of failures in minutes, not hours, by replaying historical data in the twin. |

When you align your digital twin initiative with these core business outcomes, it becomes a strategic investment that drives undeniable value across the organization.
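Several of the metrics in the table above reduce to simple arithmetic, and it helps to baseline them before the pilot starts. The sketch below uses the standard definitions (OEE as availability times performance times quality, FPY as first-time passes over units started, MTTR as total repair time over number of repairs). The function names and the shift figures are made up for illustration.

```python
from typing import List


def oee(planned_min: float, downtime_min: float, ideal_cycle_min: float,
        total_units: int, good_units: int) -> float:
    """Overall Equipment Effectiveness = availability x performance x quality."""
    run_min = planned_min - downtime_min
    availability = run_min / planned_min
    performance = (ideal_cycle_min * total_units) / run_min
    quality = good_units / total_units
    return availability * performance * quality


def first_pass_yield(units_started: int, units_passed_first_time: int) -> float:
    """Share of units that pass without rework or repair."""
    return units_passed_first_time / units_started


def mttr(repair_durations_min: List[float]) -> float:
    """Mean Time to Repair across a list of repair events."""
    return sum(repair_durations_min) / len(repair_durations_min)


# Hypothetical 8-hour shift: 48 min of downtime, 400 units built, 380 good.
shift_oee = oee(planned_min=480, downtime_min=48, ideal_cycle_min=1.0,
                total_units=400, good_units=380)
shift_fpy = first_pass_yield(units_started=400, units_passed_first_time=380)
shift_mttr = mttr([30.0, 45.0, 15.0])
```

Computing the same figures before and after the twin goes live gives the business case a like-for-like comparison.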

Where the Rubber Meets the Road: Practical Digital Twin Applications

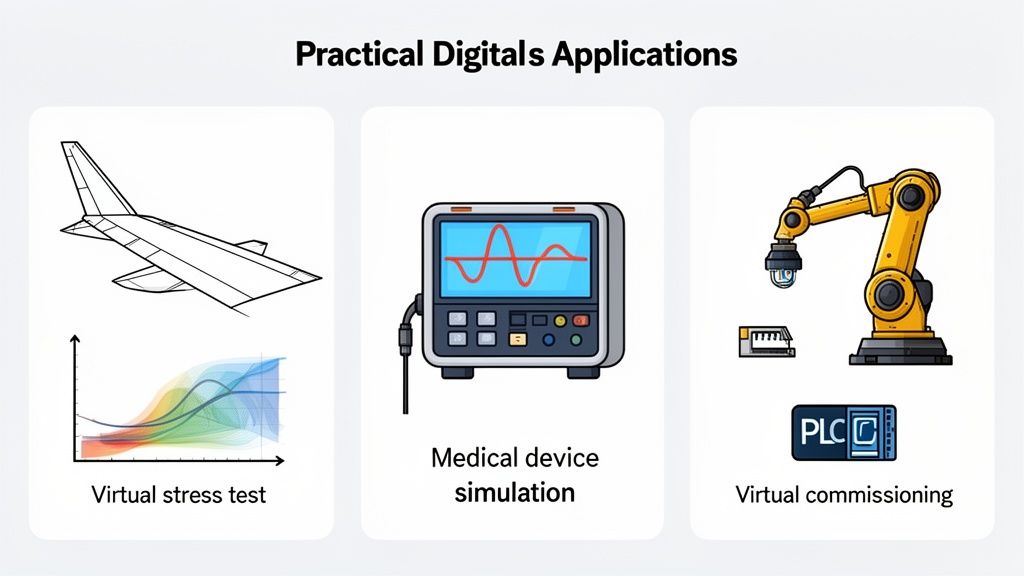

While high-level outcomes are compelling, the true value of digital twins becomes clear when applied to specific, high-stakes engineering problems. For leaders in aerospace, medical devices, and industrial automation, abstract benefits are secondary to solving the unforgiving challenges inherent in industries where failure is not an option.

A digital twin serves as a virtual proving ground, enabling testing, validation, and optimization in a digital environment before committing resources to a physical build. This is where a “design for testability” mindset pays dividends—building test hooks into hardware and firmware from day one enables a far more powerful and accurate digital twin later.

Realistic Operating Scenario: Firmware Verification for a Medical Infusion Pump

The Challenge: Your team is developing a new, network-connected infusion pump. The firmware is safety-critical, and the V&V process is a major schedule risk. Timeline pressure is high, with a hard deadline for regulatory submission. A late-stage bug found during physical testing could delay the launch by six months.

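The Solution: You implement a digital twin of the pump, modeling its mechanical components, fluid dynamics, and sensor inputs. The actual production firmware runs against this twin in a hardware-in-the-loop (HIL) simulation: the real controller executes the firmware while the twin supplies the simulated pump behavior and sensor signals.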

This allows your team to:

- Simulate Physiological Extremes: Test firmware response to varying fluid viscosities, unexpected back pressures, and difficult bubble detection scenarios that are hard to replicate consistently on a bench.

- Run Millions of Cycles: Virtually test the device for millions of operational cycles to uncover rare, intermittent bugs in firmware logic that would likely be missed in limited physical testing.

- Inject Faults Safely: Introduce sensor failures or motor stalls in the virtual model to confirm the firmware’s safety-critical fault handling routines perform exactly as specified.

The Outcome: This virtual V&V process generates a massive volume of objective evidence to support regulatory submissions. It demonstrates a level of testing rigor that far exceeds what is achievable with benchtop tests alone, directly supporting compliance with stringent FDA and ISO requirements and de-risking the product launch schedule.
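The fault-injection step in this scenario can be sketched in pure software. In the example below, `controller_step` is a hypothetical stand-in for the firmware logic under test, not real pump firmware, and the pressure limit, sensor functions, and state names are invented for illustration. In an actual HIL setup the same scenarios would drive the real controller through simulated signals.

```python
from typing import Callable, List, Optional

OCCLUSION_LIMIT_KPA = 100.0  # hypothetical alarm threshold


def controller_step(pressure_kpa: Optional[float]) -> str:
    """Hypothetical stand-in for the safety logic under test."""
    if pressure_kpa is None:
        return "ALARM_SENSOR_FAULT"
    if pressure_kpa > OCCLUSION_LIMIT_KPA:
        return "ALARM_OCCLUSION"
    return "RUNNING"


def run_scenario(sensor: Callable[[int], Optional[float]], steps: int) -> List[str]:
    """Feed simulated readings to the controller; stop at the first alarm."""
    states = []
    for t in range(steps):
        state = controller_step(sensor(t))
        states.append(state)
        if state != "RUNNING":
            break
    return states


def nominal(t: int) -> Optional[float]:
    """Healthy sensor: constant line pressure."""
    return 40.0


def dropout_at(fault_step: int) -> Callable[[int], Optional[float]]:
    """Injected fault: the sensor stops reporting from fault_step onward."""
    return lambda t: None if t >= fault_step else 40.0


def back_pressure_ramp(t: int) -> Optional[float]:
    """Injected fault: back pressure climbs 10 kPa per step."""
    return 40.0 + 10.0 * t


healthy_run = run_scenario(nominal, steps=5)
dropout_run = run_scenario(dropout_at(3), steps=10)
occlusion_run = run_scenario(back_pressure_ramp, steps=10)
```

Each scenario is deterministic and repeatable, which is what turns a simulated run into evidence: the same fault can be replayed against every firmware build.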

Aerospace Component Lifecycle Management

In aerospace, where reliability is paramount, digital twins are becoming essential for managing the lifecycle of critical components. They integrate directly into formal risk management processes like FMEA/FMECA, from design validation to in-service health monitoring. An aerospace digital twin enables virtual stress testing, where simulated loads, thermal cycles, and vibrations are applied to a high-fidelity model to predict fatigue life and identify potential failure modes early in the design phase.

Once a component is in service, real-world data from onboard sensors continuously feeds its twin. This allows the model to track the actual wear and tear on its physical counterpart, accurately predicting when maintenance is required and moving from a scheduled to a condition-based maintenance paradigm.

Virtual Commissioning for Industrial Automation

One of the most immediate and high-impact applications of digital twins is in industrial automation, specifically virtual commissioning. The traditional process of installing and commissioning a new robotic cell or automated line is notoriously risky, often causing costly delays. Digital twins are already being used to design and optimize complex systems like automated storage and retrieval systems (ASRS) for smart warehouse automation, and commissioning is the next frontier.

A digital twin of the entire production cell—including robots, conveyors, PLCs, and safety systems—changes the game. Long before physical equipment is delivered, your automation engineers can:

- Fully Test Control Code: Load the actual PLC and robot control code into the virtual environment and debug it completely offline.

- Optimize Cycle Times: Run the simulated production line to identify bottlenecks and fine-tune robot paths for maximum throughput.

- Train Operators: Allow operators to train on the virtual cell in a safe, simulated environment, ensuring they are productive and confident from day one.

This approach transforms on-site installation from a long, unpredictable process into a smooth, almost “plug-and-play” activity. By the time the physical cell is bolted to the floor, the control logic is already 95% validated, drastically cutting commissioning time and risk. More broadly, digital twins are expected to be standard practice for predictive maintenance by 2026, with 34% of users reporting improved product quality, 30% reporting lower manufacturing costs, and 28% reporting less unplanned downtime.
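The cycle-time optimization step can be illustrated with a very small calculation. The sketch below assumes a strictly serial line in steady state with no buffers, so throughput is set by the slowest station; a real line twin would typically use discrete-event simulation to capture buffers, blocking, and variability. The station names and cycle times are hypothetical.

```python
from typing import Dict, Tuple


def find_bottleneck(cycle_times_s: Dict[str, float]) -> Tuple[str, float]:
    """Return the slowest station and the resulting line rate in units/hour."""
    station = max(cycle_times_s, key=cycle_times_s.get)
    return station, 3600.0 / cycle_times_s[station]


# Hypothetical station cycle times in seconds.
baseline = {"load": 12.0, "weld": 18.0, "inspect": 15.0}

# What-if: a faster robot path brings the weld cycle down to 14 seconds.
what_if = {**baseline, "weld": 14.0}

baseline_result = find_bottleneck(baseline)
what_if_result = find_bottleneck(what_if)
```

Note how the bottleneck moves from welding to inspection in the what-if case. Catching that shift in simulation is the point: speeding up one station only pays off until the next constraint takes over.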

A Phased Roadmap to Implement Your First Digital Twin

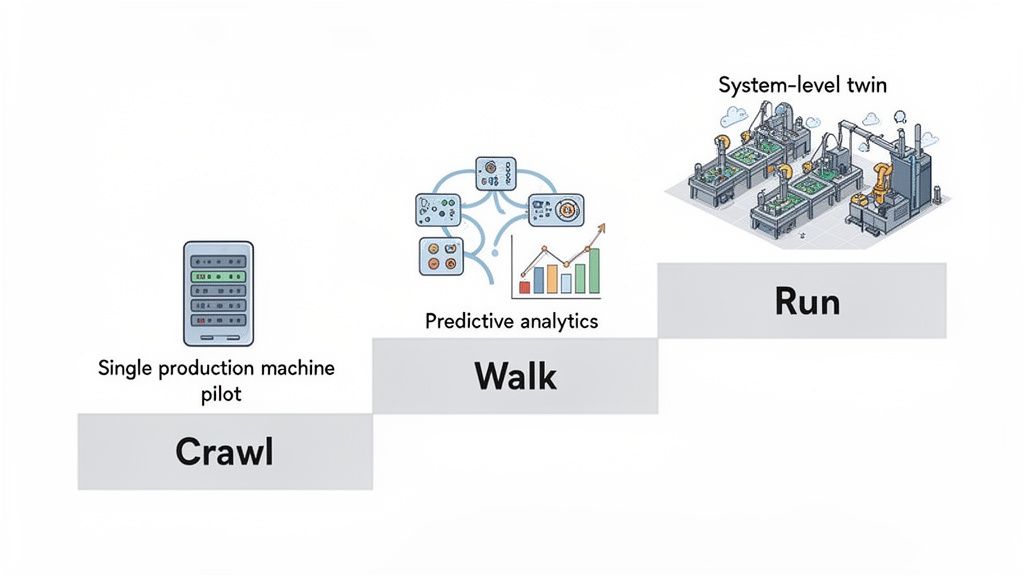

Attempting to build a full-factory digital twin from scratch is a common mistake, especially for resource-constrained engineering teams. This approach often leads to budget overruns and a lack of demonstrable results. A more disciplined path to adopting digital twinning in manufacturing is the “Crawl, Walk, Run” method. This program execution model focuses on starting small, proving value quickly, and building momentum.

The Crawl Stage: Your First Win

The objective here is simple: pick one painful, high-impact problem and solve it. This stage is not about building a perfect, futuristic twin; it’s about achieving a tangible win that can be translated into a clear financial or operational metric.

Start with a single, critical asset. Identify the machine that is a constant source of problems—the primary bottleneck, the station with poor yield, or equipment that fails unpredictably.

Key Activities for the Crawl Stage:

- Laser-Focus the Problem: Be specific. Instead of “improve uptime,” aim to “Reduce unplanned downtime on CNC-03 by 15% in Q2.”

- Identify Minimum Viable Data: Determine the absolute minimum data required to solve the problem. For a motor, this might be just vibration and temperature. Keep it simple.

- Build a Foundational Model: Construct a basic twin focused only on that one problem.

The outcome is a focused, functional twin and a powerful ROI story: “By investing X hours and Y dollars, we prevented two shutdowns and saved Z in lost production.” This narrative gains executive attention.

The Walk Stage: Gaining Intelligence

With a successful pilot, you’ve earned the credibility to expand. The “Walk” stage involves applying the lessons from your first win to a broader scope, making your initial twin smarter and beginning to connect it to adjacent systems.

This is the time to integrate more data streams for context, such as pulling data from your Manufacturing Execution System (MES) to correlate machine performance with specific work orders or material batches. This is also where predictive analytics can be introduced.
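Correlating machine data with MES context often comes down to a time-based join. The sketch below tags each sensor reading with the work order that was active when it was taken. The tuple layouts and the `WO-A`/`WO-B` identifiers are assumptions for illustration; an actual MES export will have its own schema.

```python
from typing import List, Optional, Tuple

Reading = Tuple[float, float]         # (timestamp_s, value)
WorkOrder = Tuple[float, float, str]  # (start_s, end_s, order_id)


def tag_with_work_order(
    readings: List[Reading], work_orders: List[WorkOrder]
) -> List[Tuple[float, float, Optional[str]]]:
    """Attach the active work order ID to each reading, or None if idle."""
    tagged = []
    for ts, value in readings:
        order_id = next(
            (oid for start, end, oid in work_orders if start <= ts < end),
            None,
        )
        tagged.append((ts, value, order_id))
    return tagged


# Hypothetical MES schedule and vibration readings.
work_orders = [(0.0, 100.0, "WO-A"), (100.0, 200.0, "WO-B")]
readings = [(10.0, 1.2), (150.0, 3.4), (250.0, 1.1)]
tagged = tag_with_work_order(readings, work_orders)
```

Once readings carry a work order ID, questions such as "does vibration rise on a particular material batch?" become a simple group-by.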

The Walk phase is where the twin evolves from a reactive monitoring tool into a proactive, predictive asset. It begins to answer not just “What is happening now?” but “What is likely to happen next?”

This stage builds critical confidence across the organization. As you explore broader digital transformation solutions, the tangible successes from your twin program become a powerful internal case study.

The Run Stage: Scaling Across the System

The “Run” stage is where you capitalize on proven success. Having validated the model on a single asset and then expanded its intelligence, you can now connect multiple twins to create a true system-level view—a twin of an entire production line or a network of interconnected assets. At this level, the digital twin begins to function as a central nervous system for your operations.

Key Activities for the Run Stage:

- System-Level Integration: Connect individual asset twins to model an entire process from start to finish.

- Closed-Loop Control: Empower the twin to send commands back to the physical world, automatically optimizing parameters in real time.

- Enterprise System Integration: Link the twin to ERP and PLM systems for a complete, end-to-end view of the value chain.
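Closed-loop control raises the stakes, because the twin now acts on physical hardware. One plausible safeguard is to clamp and rate-limit whatever the twin recommends before it reaches the machine. The sketch below shows that idea; the function name, limits, and step size are hypothetical, and a real deployment would sit behind the plant's existing safety systems rather than replace them.

```python
def next_setpoint(current: float, recommended: float,
                  low: float, high: float, max_step: float) -> float:
    """Move toward the twin's recommendation within safe limits.

    The recommendation is first clamped to [low, high], then the change
    applied in one update is limited to +/- max_step.
    """
    target = min(max(recommended, low), high)
    delta = max(-max_step, min(max_step, target - current))
    return current + delta


# Hypothetical spindle-speed example: the twin asks for more than the
# allowed maximum, so the setpoint creeps up one bounded step at a time.
first = next_setpoint(current=100.0, recommended=130.0,
                      low=80.0, high=120.0, max_step=5.0)
near_limit = next_setpoint(current=118.0, recommended=130.0,
                           low=80.0, high=120.0, max_step=5.0)
```

Bounding each update keeps a bad model prediction from turning into an abrupt physical change, and gives operators time to intervene.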

The Full Roadmap at a Glance

This methodical progression transforms the daunting task of digital twin implementation into a manageable program. Each phase builds on the last, ensuring every step is grounded in proven value and organizational buy-in.

Digital Twin Implementation Roadmap

| Phase | Objective | Key Activities | Expected Outcome |
|:---|:---|:---|:---|
| Phase 1: Crawl | Prove concept value with a single, high-impact use case. | Identify one critical asset. Define a clear business problem. Build a basic, focused model. | A functional pilot twin with a clear, demonstrable ROI. Early buy-in secured. |
| Phase 2: Walk | Expand the twin’s intelligence and scope. | Integrate more data sources (e.g., MES). Add predictive analytics. Connect to adjacent systems. | A more sophisticated twin that provides predictive insights, not just monitoring. |
| Phase 3: Run | Scale to a system-level view and enable automation. | Connect multiple asset twins. Implement closed-loop controls. Integrate with enterprise systems (ERP, PLM). | A fully integrated, system-level twin that actively optimizes the production process. |

By following this “Crawl, Walk, Run” framework, you de-risk the entire initiative and build a solid foundation for enterprise-wide adoption.

Common Pitfalls That Derail Digital Twin Programs

Many promising digital twin initiatives fail for predictable and preventable reasons. Success rarely hinges on the software alone; it depends on the strategy, planning, and operational discipline behind the technology. Understanding these common failure modes is a critical risk management step.

Starting With a Vague or Overly Ambitious Scope

The most common mistake is starting without a crystal-clear, narrowly defined business problem. A goal like “improve efficiency” is a wish, not a plan. Without a specific, measurable target, the project will drift, making it impossible to demonstrate a clear return on investment. This ambiguity fuels scope creep, and soon the team is trying to build a perfect, all-encompassing factory twin—an effort doomed to fail.

A warning sign is a project charter that uses broad terms like “optimize production” without specifying which asset, what metric, and by how much.

The Fix: Force the team to answer one question: “What is the single business metric we are trying to move?” Start with a small pilot project focused on a single machine with a known, painful, and expensive problem. A win there builds the momentum and political capital needed for expansion.

Underestimating the Data Integration Challenge

A digital twin is only as good as the data that feeds it. Organizations consistently underestimate the effort required to get clean, reliable, and synchronized data from the factory floor into the virtual model. Data often lives in siloed systems—PLCs, MES, ERP, and legacy databases—each with its own protocol and format. A digital twin project exposes all the technical debt from years of uncoordinated IT and OT decisions.

The result is a sophisticated model starved of good data, leading to predictions that don’t match reality. When a twin’s output cannot be correlated with real-world outcomes, it signals a data quality or integration failure.

Common data roadblocks include:

- Poor Sensor Data: Uncalibrated, noisy, or low-quality sensors providing unreliable inputs.

- Data Latency: Significant delays in data transmission causing the twin to fall out of sync with its physical counterpart.

- Lack of Context: Raw sensor data is often meaningless without contextual information from systems like the MES.

- Inaccessible Historical Data: Critical performance and maintenance records locked away in unstructured formats or proprietary systems.

The Fix: Treat your data infrastructure as a foundational pillar of the project. This means investing upfront in sensor calibration, data cleansing pipelines, and robust integration middleware, which aligns with Sheridan’s focus on verification discipline.
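A lightweight gate in front of the twin can catch some of these roadblocks early. The sketch below checks each reading for missing values, implausible values, and staleness before the model is allowed to consume it. The function name, the issue labels, and the limits are assumptions for illustration; appropriate thresholds depend on the sensor and on how quickly the process changes.

```python
from typing import List, Optional


def reading_issues(value: Optional[float], sampled_at_s: float, now_s: float,
                   low: float, high: float, max_age_s: float) -> List[str]:
    """Return a list of data-quality issues for one reading (empty if clean)."""
    issues = []
    if value is None:
        issues.append("missing")
    elif not (low <= value <= high):
        issues.append("out_of_range")
    if now_s - sampled_at_s > max_age_s:
        issues.append("stale")  # the twin would be out of sync with the asset
    return issues


# Hypothetical temperature channel: plausible range 0 to 150, max age 5 s.
limits = dict(low=0.0, high=150.0, max_age_s=5.0)
clean_reading = reading_issues(72.0, sampled_at_s=100.0, now_s=101.0, **limits)
late_reading = reading_issues(72.0, sampled_at_s=100.0, now_s=110.0, **limits)
```

Logging these issues over time also produces a simple data-readiness report, which is useful evidence when making the case for sensor or network upgrades.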

Failing to Align With Clear Business KPIs

Many digital twin programs fizzle out because they are led by IT or a technology group in isolation, framed as a technology implementation rather than a business-driven initiative. Without direct alignment to the Key Performance Indicators (KPIs) that matter to the COO, CFO, and head of operations, the program will struggle for funding and support.

If you cannot draw a straight line from your twin’s output to a reduction in the Cost of Poor Quality (COPQ), an increase in Overall Equipment Effectiveness (OEE), or a shorter time-to-market, you are setting yourself up to fail. The digital twin must be positioned as a tool that solves tangible financial and operational problems.

Monday Morning Usefulness: Your Next Steps

A successful digital twin program begins with a clear, focused plan. Before engaging vendors or building models, answer these fundamental questions to frame your initiative:

- Identify the Pain: What is the single most painful, costly, and chronic problem in your manufacturing operation or development process right now? Is it a specific machine’s downtime, a line’s low yield, or a bottleneck in your NPI verification process? Be specific.

- Define the Metric: What specific, measurable KPI will you use to define success? Aim for something like, “Reduce unplanned downtime on Assembly Line 3 by 20%” or “Cut the number of physical prototypes for Project X by one.”

- Assess Your Data Readiness: Do you have the necessary sensors on the target asset? Is the data accessible? Who on your team understands the data infrastructure and can lead the integration effort? Acknowledging data gaps early is critical.

- Secure an Executive Sponsor: Who in operations or engineering leadership feels the pain you identified? A successful pilot needs a champion who can protect the project and communicate its value to the rest of the organization.

Answering these questions will provide the foundation for a pragmatic, high-impact pilot project. An experienced engineering partner can also provide the systems integration expertise to accelerate your first project. Sheridan Technologies offers manufacturing readiness assessments to help you pinpoint the highest-impact pilot and build a realistic roadmap for your digital twin implementation. Schedule an assessment with our team.